Data orchestration tools automate, unify, and manage data workflows across sources to ensure seamless integration, consistency, and reliable, real-time data use.

Looking for the best data orchestration tools for 2025? You have great options, including FineDataLink, Apache Airflow, Prefect, Dagster, Astronomer, Domo, Flyte, Kedro, Kubeflow, and Metaflow.

Businesses like yours now rely on these platforms more than ever. Just check out the numbers:

| Source | Statistic |
|---|---|
| IBM | 99% of enterprise developers are exploring or building AI agents |
| IBM | 79% of organizations report some level of agentic AI adoption |
| Gartner | 75% of organizations will be using AI orchestration tools by 2025 |

You can boost efficiency and automation by streamlining workflows with the right data orchestration tools. They automate data tasks, improve data quality, and connect all your sources in real time.

When you hear about a data orchestration platform, think of it as the conductor of your data workflows. It brings together all your data from different sources and makes sure everything moves in harmony. You do not have to worry about data sitting in silos or getting lost in translation. The platform automates data collection, transforms and integrates information, and keeps your data clean and consistent. This means you can trust your data and use it for real-time decisions.

Here’s what a data orchestration platform usually does for you:

You might wonder how this is different from traditional ETL tools. Take a look at this table:

| Aspect | Data Orchestration Platforms | Traditional ETL Tools |
|---|---|---|
| Scope | Oversees the entire data pipeline ecosystem | Handles specific data movement tasks |
| Workflow Management | Sophisticated workflow management | Linear workflow automation |
| Error Handling | End-to-end monitoring and recovery | Manages errors within specific processes |
| Job Scheduling | Advanced, event-driven scheduling | Basic, time-based scheduling |

With a data orchestration platform, you get more control and flexibility over your data workflows.

In 2025, you will see data orchestration tools become even more important. Businesses want to move faster and smarter. The demand for advanced AI and automation is growing in every industry. By 2025, experts predict that 80% of organizations will switch from old automation tools to modern service orchestration platforms. Half of all companies will develop AI orchestration to get the most out of their AI investments.

The market for these platforms is booming. Some reports say the AI orchestration market could reach $12.9 billion in 2025, growing at nearly 20% each year. This shows how much companies value agility and efficiency. If you want to stay ahead, using a data orchestration platform will help you manage complex data workflows, improve operations, and make better decisions in real time.

When you look for the best data orchestration tools, you want options that fit your business needs and make your data workflows smooth and reliable. Let’s break down the top data pipeline tools for 2025, so you can see what makes each one stand out.

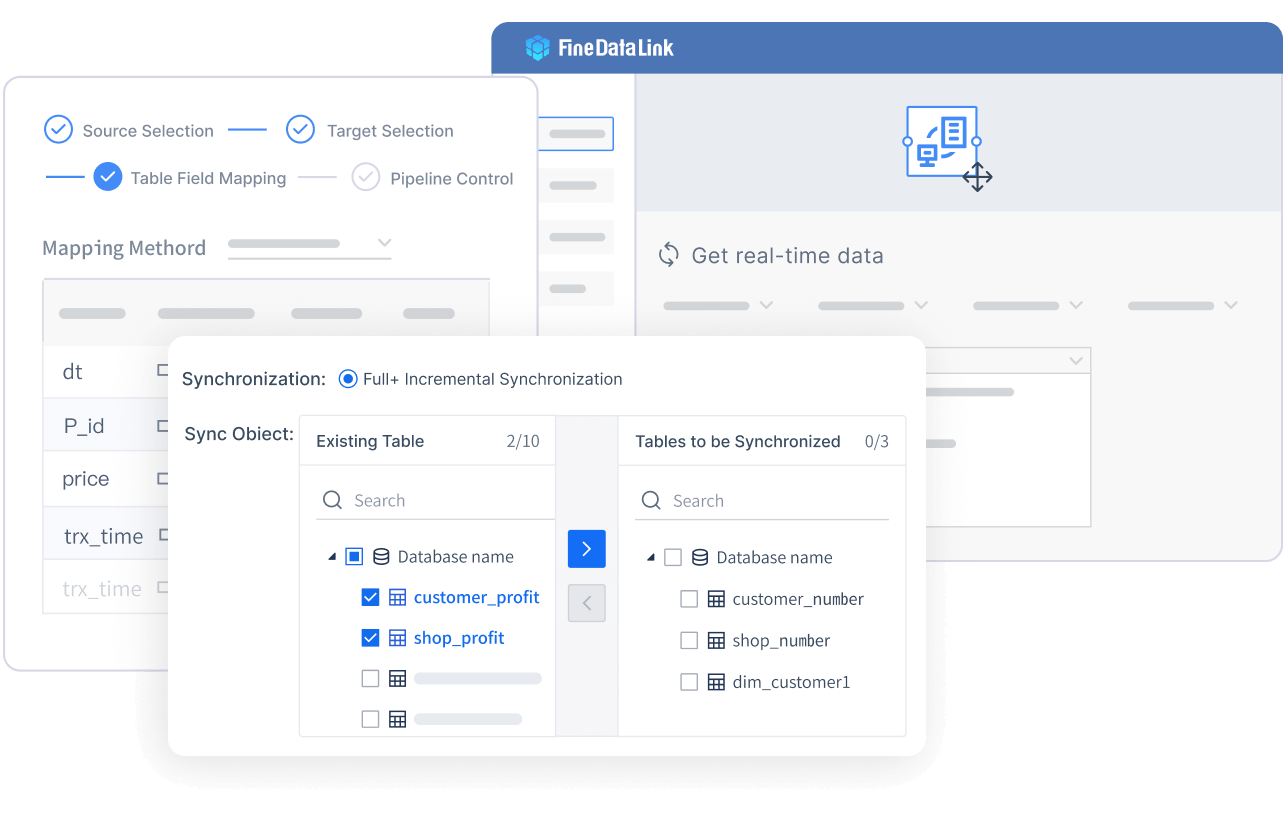

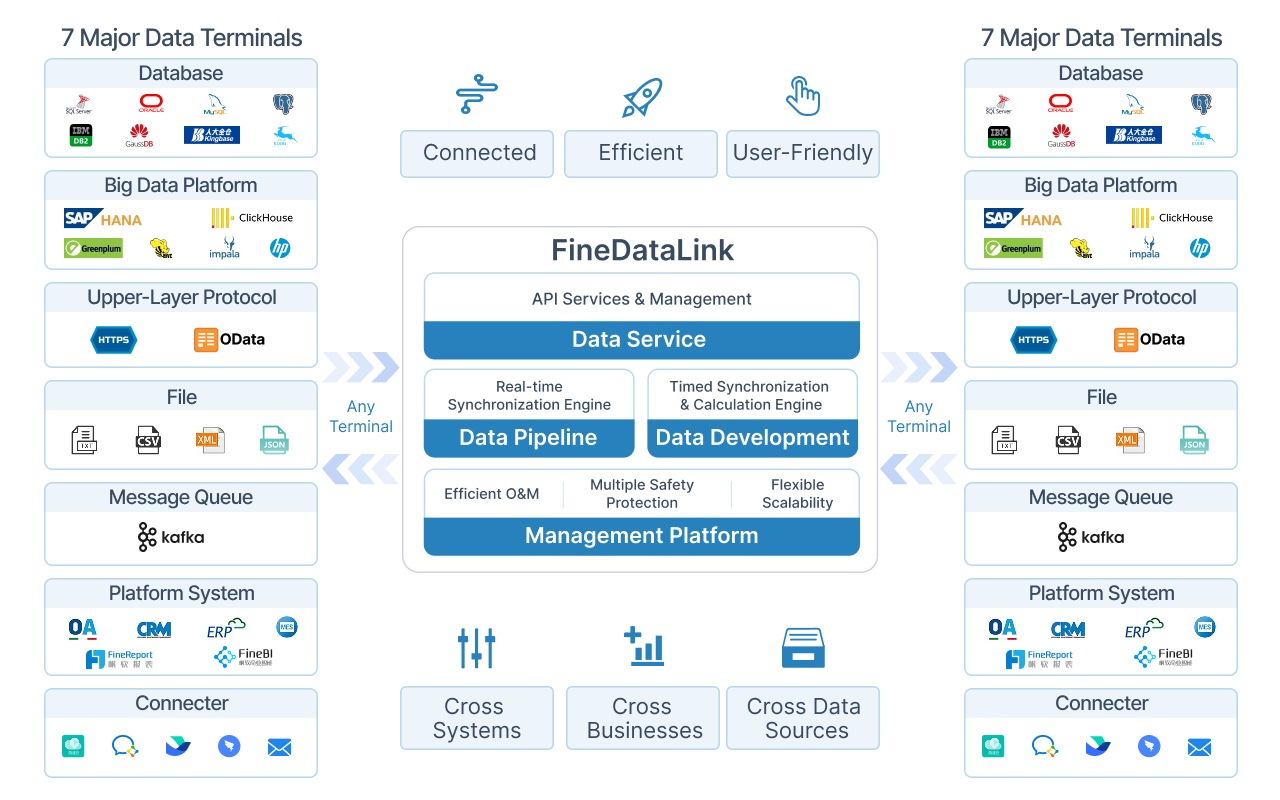

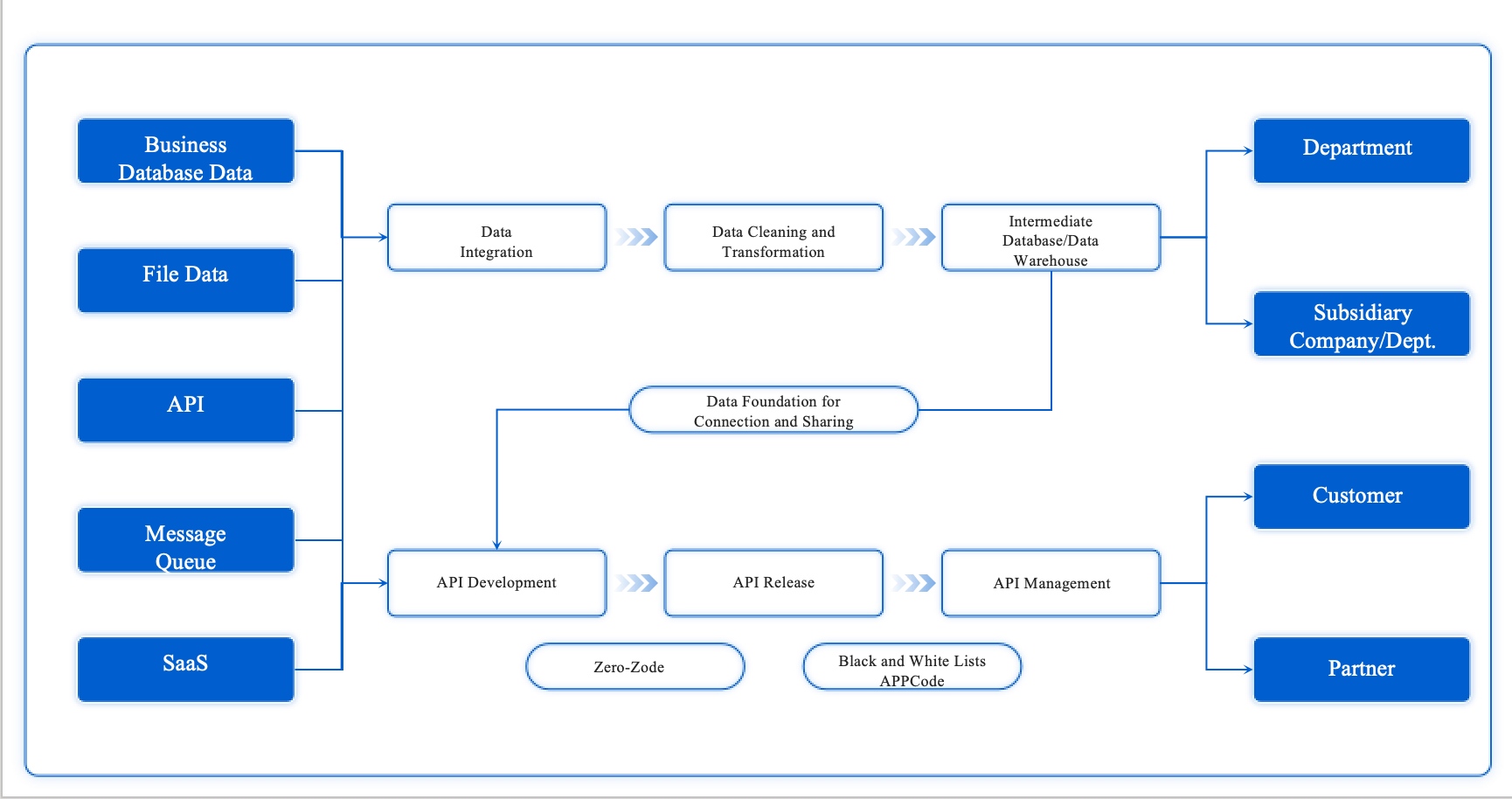

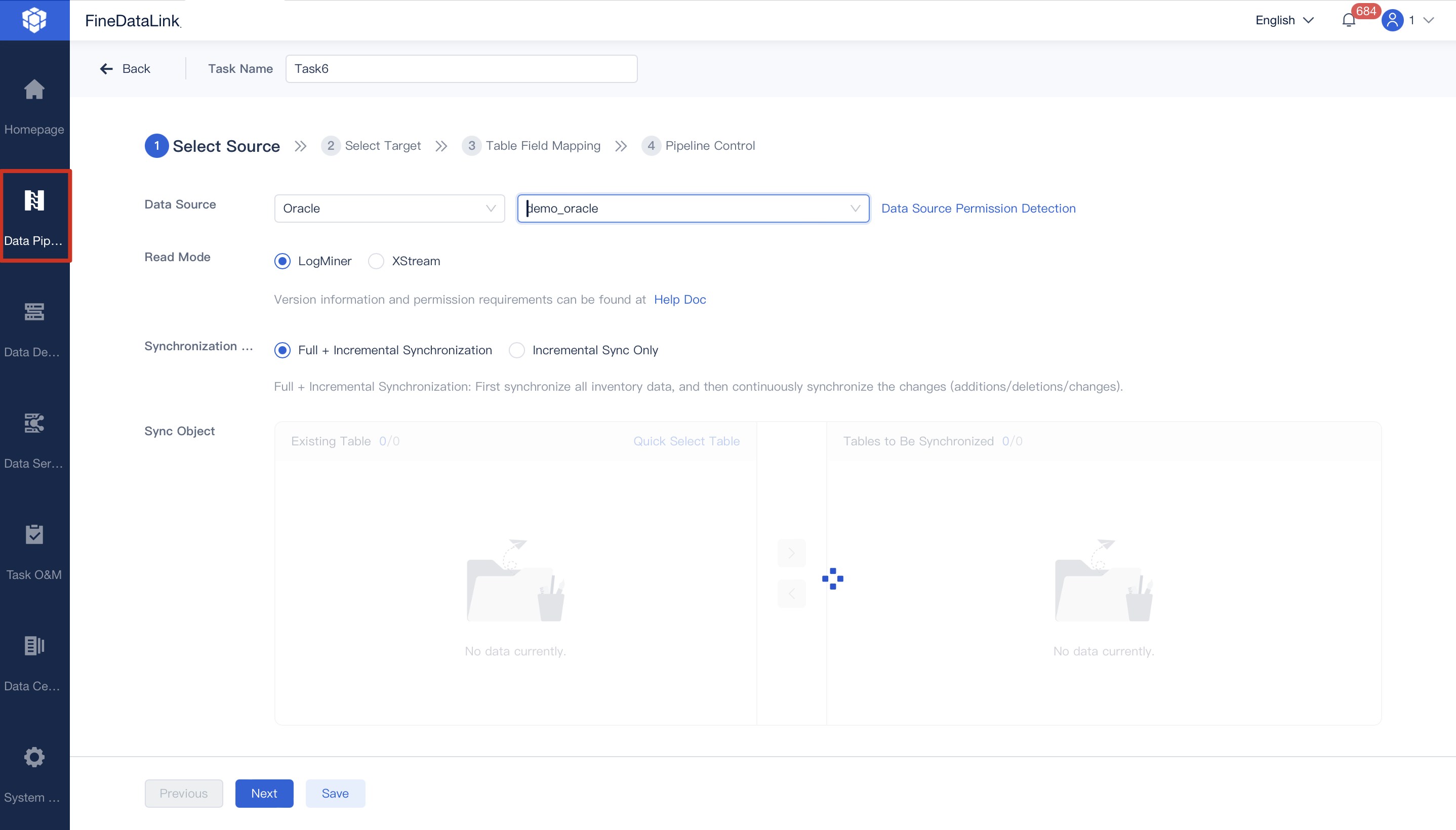

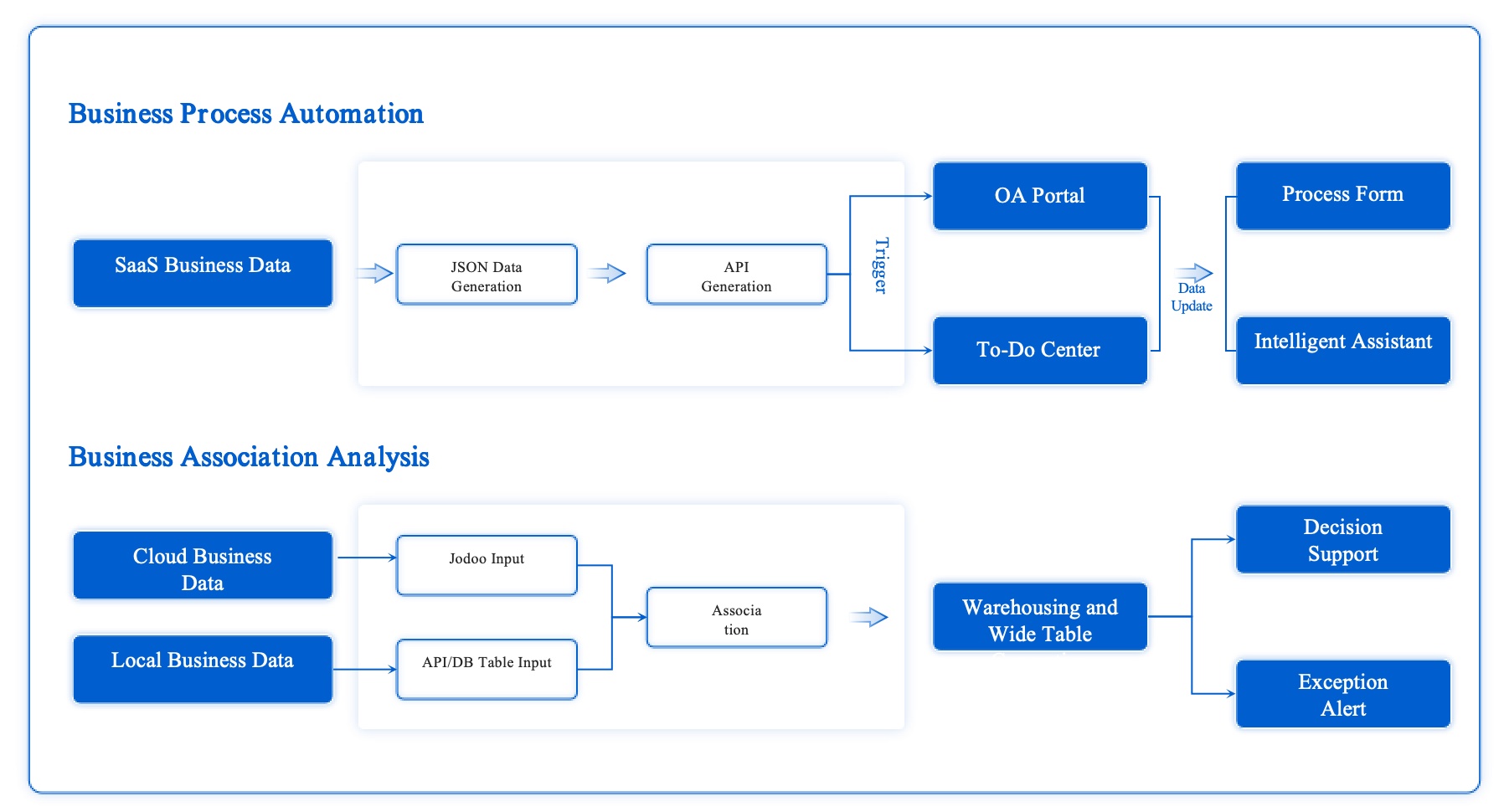

FineDataLink by FanRuan is a modern, scalable platform designed for real-time data integration and advanced ETL/ELT processes. You can collect data from many sources, including relational and non-relational databases, interfaces, and files. FineDataLink synchronizes data in real time, so you always have up-to-date information for your business intelligence needs. The platform uses a dual-core engine for both ETL and ELT, giving you flexibility to customize your data pipelines. You can set up and manage everything with a low-code interface, making it easy for anyone to build and monitor data workflows. FineDataLink also provides visual management tools and supports standardized, high-performance solutions for complex scenarios.

Website: https://www.fanruan.com/en/blog/data-warehouse-solutions

| Feature | Description |
|---|---|
| Multi-source data collection | Integrates various databases and files. |
| Non-intrusive real-time sync | Keeps data fresh and synchronized. |
| Efficient data development | Dual-core engine for ETL and ELT. |

Apache Airflow is one of the best data orchestration tools you’ll find. You get a powerful platform that lets you build, schedule, and monitor complex data pipelines. Airflow models each pipeline as a Directed Acyclic Graph (DAG): tasks are nodes, dependencies are edges, and because the graph cannot contain cycles, every task has a well-defined place in the run order. Big companies like PayPal and Google trust Airflow because it scales easily and works with many systems. You’ll love the user-friendly interface, which helps you visualize and troubleshoot your data workflows. Airflow also supports automation, scripting, and parallel scaling, so you can handle large workloads with ease.

Website: https://airflow.apache.org/

Tip: If you need to automate data pipelines and want clear visualization, Airflow is a solid choice.
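To make the DAG idea concrete, here is a minimal sketch that uses only Python's standard library rather than Airflow's own API. The task names (`extract`, `transform`, `validate`, `load`) are hypothetical. It shows that an acyclic dependency graph always yields a valid run order, while a graph with a cycle is rejected.

```python
from graphlib import TopologicalSorter, CycleError

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"transform"},
    "load": {"transform", "validate"},
}

def run_order(graph):
    """Return one valid execution order, or raise CycleError if a cycle exists."""
    return list(TopologicalSorter(graph).static_order())

order = run_order(dependencies)
print(order)

# A cycle (a depends on b, b depends on a) has no valid order and is rejected.
try:
    run_order({"a": {"b"}, "b": {"a"}})
    cycle_detected = False
except CycleError:
    cycle_detected = True
print(cycle_detected)
```

An orchestrator does much more than this (scheduling, retries, logging), but the ordering rule at its core is the same.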

Prefect is another favorite among data orchestration tools. You get an open-source platform that makes it easy to define, schedule, and monitor your data pipelines. Prefect organizes your work into tasks and flows, so you can manage each step and set up task dependencies in ordinary Python code. The platform offers simple retry logic, so failed tasks can try again automatically. You can monitor everything from the Prefect UI and get notifications if something goes wrong. This makes your data workflows more reliable and less stressful.

Website: https://www.prefect.io/

| Feature | Description |
|---|---|
| Orchestration Tool | Open-source, easy to define and monitor data pipelines. |
| Tasks and Flows | Organizes work into manageable units. |
| Retry Logic | Automatic retries for failed tasks. |
| Monitoring | Visual UI for tracking workflows. |
| Notifications | Alerts for errors and issues. |
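If you are curious what automatic retries look like under the hood, here is a rough sketch in plain Python. It is not Prefect's implementation or API. The `retry` decorator and the `flaky_fetch` task are hypothetical names made up for illustration.

```python
import time
from functools import wraps

def retry(max_attempts=3, delay_seconds=0.0):
    """Re-run a failing function up to max_attempts times before giving up."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return func(*args, **kwargs)
                except Exception:
                    if attempt == max_attempts:
                        raise  # out of attempts: surface the last error
                    time.sleep(delay_seconds)  # wait before the next try
        return wrapper
    return decorator

calls = {"count": 0}

@retry(max_attempts=3)
def flaky_fetch():
    """Hypothetical task that fails twice, then succeeds."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("source temporarily unavailable")
    return "42 rows"

result = flaky_fetch()
print(result, "after", calls["count"], "attempts")
```

In a real orchestrator you would normally configure retries as a task option instead of writing the loop yourself, and you would usually set a non-zero delay so a struggling source has time to recover.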

Dagster stands out for its modular design and developer-friendly experience. You can break down your data pipelines into smaller pieces, making it easier to scale and manage your data workflows. Dagster uses data assets, pipelines, and resources to help you track lineage and organize your work. You get context-aware scheduling and event-based triggers, which optimize pipeline execution. Teams can work on separate modules at the same time, improving collaboration and productivity.

Website: https://dagster.io/

| Feature | Description |
|---|---|
| Data Assets | Track outputs and lineage. |
| Pipelines | Define data flow and processing steps. |
| Resources | Flexible execution environments. |
| Schedules and Sensors | Smart scheduling and triggers. |
| Partitions | Efficient batch computations. |
| Developer-Centric | Focus on testing and maintainability. |
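Partitions are easier to picture with a small example. The sketch below is plain Python, not Dagster's API, and the function names are hypothetical. It splits a date range into daily partitions and works out which ones still need to run, which is what lets a batch job skip days it has already computed.

```python
from datetime import date, timedelta

def daily_partitions(start, end):
    """List every date from start to end, inclusive."""
    days = (end - start).days
    return [start + timedelta(days=i) for i in range(days + 1)]

def partitions_to_run(all_partitions, already_materialized):
    """Keep only the partitions that have not been computed yet."""
    return [p for p in all_partitions if p not in already_materialized]

partitions = daily_partitions(date(2025, 1, 1), date(2025, 1, 5))
done = {date(2025, 1, 1), date(2025, 1, 2)}  # assumed to be finished already
pending = partitions_to_run(partitions, done)
print([p.isoformat() for p in pending])
```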

Astronomer is a cloud-native solution built around managed Apache Airflow. You can deploy and scale your data pipelines across AWS, GCP, and Azure without hassle. Astronomer helps you develop complex workflows quickly using Python, so you can respond to changing data needs fast. The platform optimizes infrastructure, so you don’t need a big team of engineers to manage your data workflows. You get flexibility, scalability, and efficient resource allocation, making Astronomer a great choice for businesses with dynamic workloads.

Website: https://www.astronomer.io/

| Advantage | Description |
|---|---|
| Scalability and Flexibility | Deploy and scale workflows across major cloud platforms. |
| Improved Speed of Development | Rapid workflow creation and iteration. |
| Efficient Resource Allocation | Optimized infrastructure management. |

Domo is a comprehensive platform that combines data integration, visualization, and AI-driven analytics. You get more than 1,000 pre-built connectors, so integrating data from different sources is simple. Domo’s user-friendly interface lets you create self-service BI dashboards and automate actions with predictive and generative AI models. The cloud amplifier architecture means you can apply analytics and AI without moving your data. Domo also supports collaborative development, so your team can share insights and build data products together.

Website: https://www.domo.com/

| Key Differentiator | Description |
|---|---|
| Comprehensive Platform | Data integration, visualization, and AI analytics. |
| User-Friendly Interface | Easy self-service BI. |
| Extensive Data Connectors | 1,000+ connectors for integration. |
| Agentic AI | Automated actions with AI models. |
| Cloud Amplifier Architecture | Analytics without data movement. |
| Collaborative Development | Team sharing and development. |

Flyte is built for machine learning workflows and data pipeline orchestration. You can customize and extend workflows with infrastructure abstraction, making it easy to adapt to your needs. Flyte supports distributed training, data processing, and ML monitoring, so you can streamline model development. The platform enables collaboration by providing isolated environments for teams. You can serialize models and track lineage for reproducibility. Flyte also manages resources dynamically and offers failure recovery, so you don’t have to rerun successful tasks.

Website: https://flyte.org/

| Feature | Description |
|---|---|
| Customizable Workflows | Adapt and extend workflows easily. |
| Out-of-the-box Integrations | Supports ML training and monitoring. |
| Collaboration | Isolated environments for teams. |
| Reproducibility | Track and reproduce experiments. |
| Resource Management | Dynamic allocation and cost control. |
| Failure Recovery | Avoid rerunning successful tasks. |
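Failure recovery of this kind boils down to remembering which tasks already succeeded. Here is a minimal sketch of that idea in plain Python. It is not how Flyte implements it, and `run_pipeline`, `train`, and the task names are hypothetical.

```python
def run_pipeline(tasks, completed):
    """Run tasks in order, skipping any whose result is already recorded.

    tasks: list of (name, callable) pairs.
    completed: dict of task name -> stored result; updated in place.
    Returns the names of the tasks that actually executed.
    """
    executed = []
    for name, func in tasks:
        if name in completed:
            continue  # succeeded in an earlier run, so reuse the stored result
        completed[name] = func()  # an exception here leaves earlier results intact
        executed.append(name)
    return executed

state = {"healthy": False}

def train():
    """Hypothetical task that fails until the worker is healthy."""
    if not state["healthy"]:
        raise RuntimeError("worker lost")
    return "model-v1"

tasks = [("prepare", lambda: "features"), ("train", train), ("report", lambda: "done")]
completed = {}

try:
    run_pipeline(tasks, completed)
except RuntimeError:
    pass  # first run fails at "train"; "prepare" is already recorded

state["healthy"] = True
second_run = run_pipeline(tasks, completed)
print(second_run)
```

The second run picks up at `train` and never repeats `prepare`. A production system would persist `completed` outside the process so the record survives a crash.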

Kedro helps you build reproducible and maintainable data pipelines. You get a project structure that makes it easy to organize complex workflows. Kedro’s data catalog lets you define storage and parsing, so managing data is straightforward. The platform visualizes pipeline nodes and their dependencies, making your workflow clear. Kedro enforces coding standards, which helps teams collaborate and transition smoothly from development to production.

Website: https://kedro.org/

| Feature | Description |
|---|---|
| Project Structure | Organizes complex workflows. |
| Data Catalog | Defines storage and parsing. |
| Pipeline Visualization | Shows dependencies and execution order. |
| Coding Standards | Promotes team collaboration. |
| Production-Ready | Smooth transition to production. |

Kubeflow is a top choice if you want scalable machine learning orchestration. It runs on Kubernetes, so you can schedule, scale, and manage ML workloads just like other Kubernetes jobs. Each node in your pipeline is a containerized component, and Kubeflow makes sure everything runs in the right order based on data dependencies.

Website: https://www.kubeflow.org/

Note: Kubeflow uses cloud-native technologies like Istio and Knative for advanced deployment. You get a user-friendly interface, even if you’re not a Kubernetes expert.

Metaflow is a Python library that makes managing complex data science workflows simple. You can move from developing models in Jupyter notebooks to running scalable production pipelines without learning new infrastructure tools. Metaflow gives you a clean Python API to handle scheduling, data movement, versioning, and scaling. It’s perfect for iterative and exploratory workflows, like machine learning model development. You can manage project progress, reproduce results, and share findings easily. The architecture is flexible and modular, so you can customize and integrate with other data pipeline tools.

Website: https://metaflow.org/

If you want to choose the best data orchestration tools for your business, consider how each platform handles data pipelines, task dependencies, and real-time data processing. The right data integration tools will help you automate your data workflows, improve data quality, and unlock new insights for your organization.

When you compare data orchestration tools, integration capabilities matter most. You want a platform that connects with your favorite databases, cloud services, and business apps. Some tools, like Azure Data Factory, offer built-in connectors for Azure services and SQL databases. SAP Data Intelligence works with both SAP and non-SAP sources, supporting hybrid and multi-cloud setups. Databricks integrates with Apache Spark, making collaborative data projects easier. FineDataLink stands out by supporting over 100 data sources and enabling automated data integration across systems. This flexibility helps you automate and streamline your data workflows without extra effort.

| Tool Name | Integration Capabilities |
|---|---|
| Azure Data Factory | Built-in connectors for various data sources, integrates with Azure services like Blob Storage and SQL Database. |
| SAP Data Intelligence | Integrates with SAP and non-SAP sources, supports hybrid and multi-cloud environments. |
| Databricks | Integrates with Apache Spark for high-performance data processing, supports collaborative data projects. |
| FineDataLink | Supports 100+ data sources, enables automated data integration, real-time data processing, and API connectivity. |

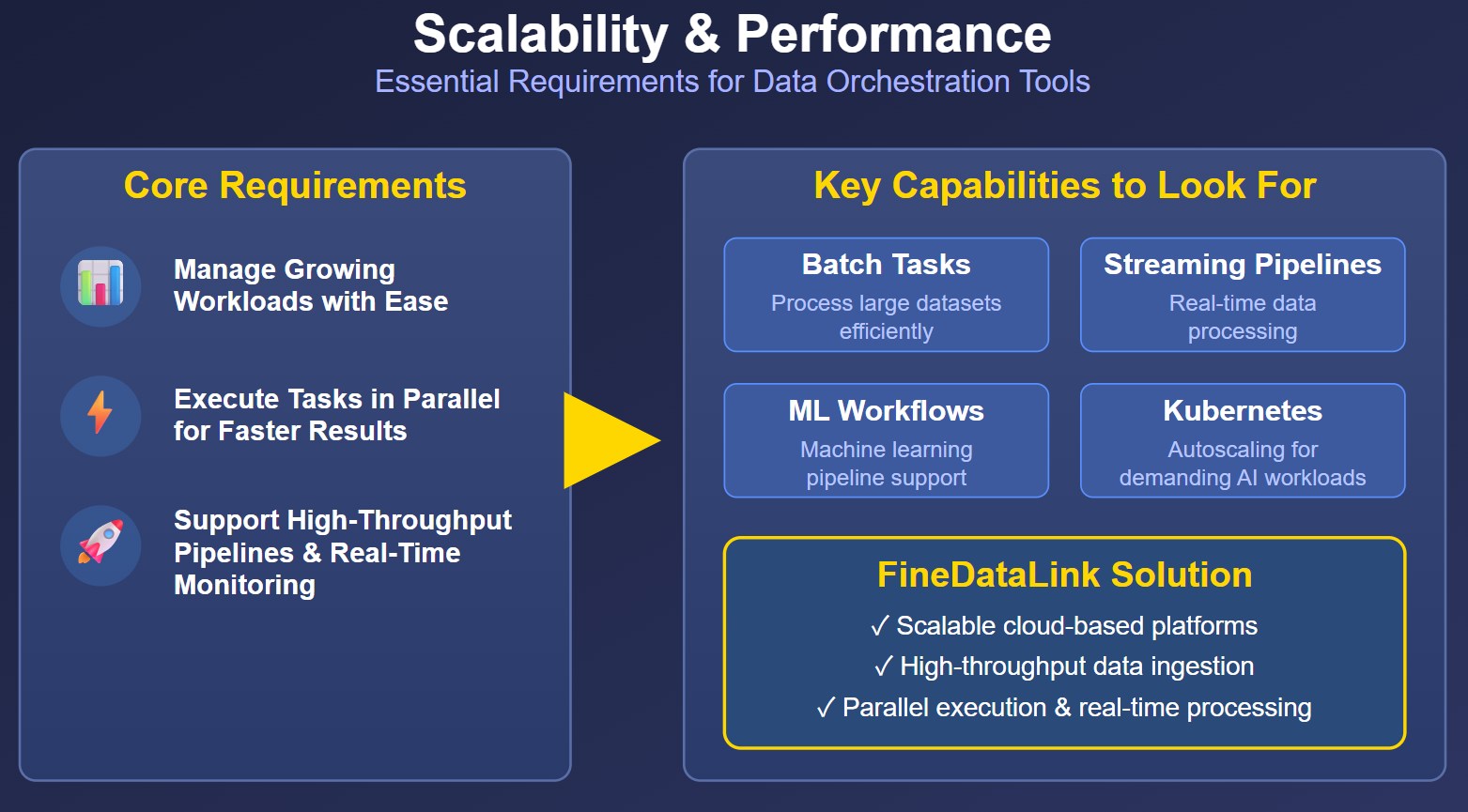

You need data orchestration tools that scale with your business. A good platform manages increased data and workloads without slowing down. Look for tools that support batch tasks, streaming pipelines, and machine learning workflows. Some orchestrators, like those using Kubernetes, offer autoscaling for demanding AI workloads. FineDataLink provides scalable cloud-based platforms and handles high-throughput data ingestion, parallel execution, and real-time data processing. This ensures your data workflows run smoothly as your needs grow.

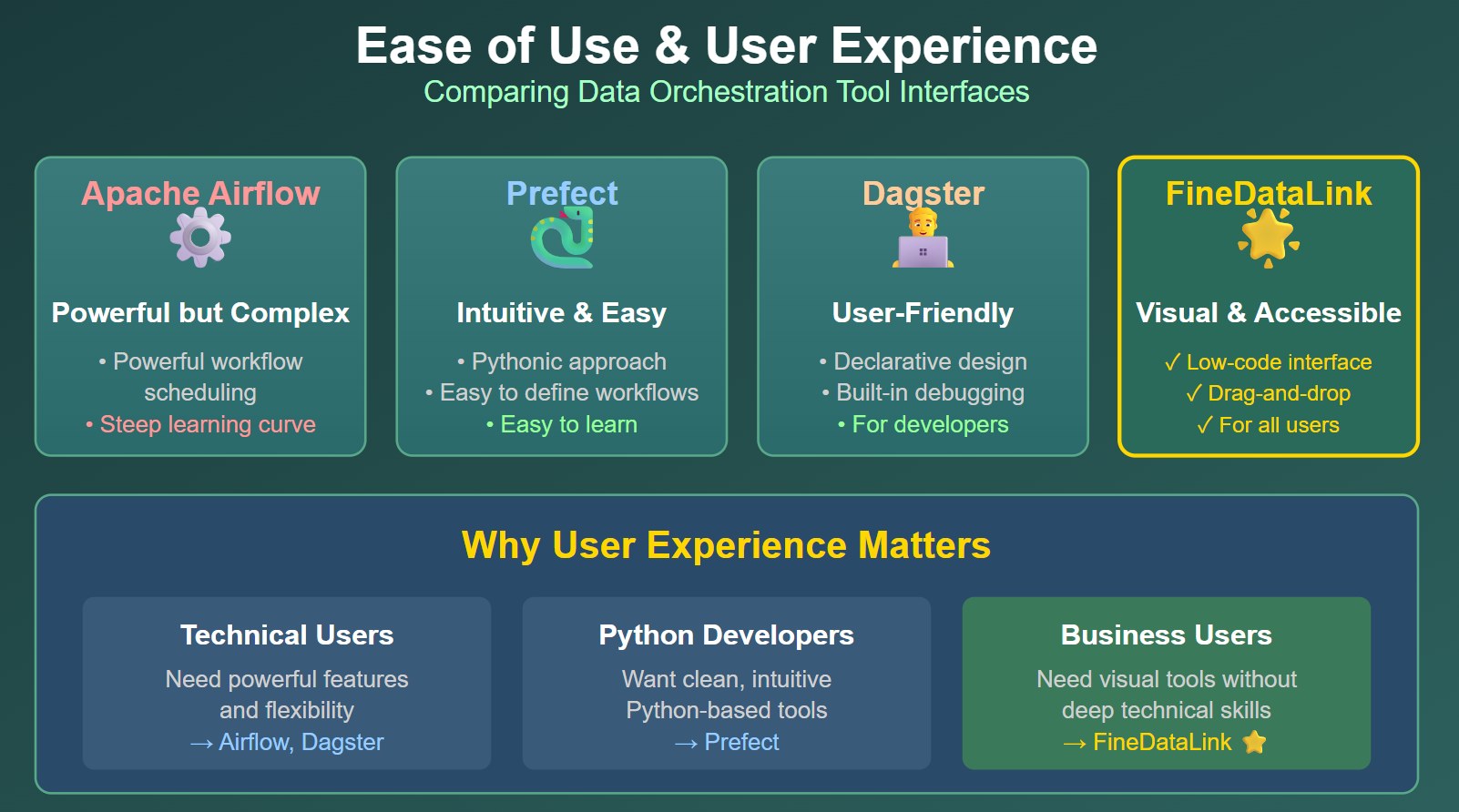

User experience can make or break your data orchestration journey. Apache Airflow gives you powerful workflow scheduling but has a steep learning curve. Prefect offers a more intuitive, Pythonic approach, making it easier to define and manage data workflows. Dagster reduces cognitive load with declarative workflow design and built-in debugging tools. FineDataLink uses a low-code interface, drag-and-drop features, and visual management, so you can automate and streamline data transformation without deep technical skills.

Real-time monitoring is essential for reliable data pipelines. Tools like New Relic, Grafana, and Elastic Observability offer deep diagnostics, customizable dashboards, and log aggregation. FineDataLink provides real-time monitoring for data workflows, pipeline health, and data transformation. You can track performance, detect issues early, and keep your data integration running smoothly.

| Tool & Vendor | Data Monitoring | Pipeline/Flow Observability | AI/ML-Driven Detection | Ease of Implementation |
|---|---|---|---|---|
| SYNQ | Yes | Yes | Yes | Easy |
| Acceldata | Yes | Yes | Yes | Moderate |
| FineDataLink | Yes | Yes | Yes | Easy |

Support and community help you solve problems and learn best practices. Airflow and Prefect have large, active communities. Dagster offers strong developer support. FineDataLink provides detailed documentation, step-by-step videos, and responsive customer service. You get help when you need it, making your data orchestration tools easier to adopt and scale.

Tip: Choose a tool with strong support and an active community. This makes troubleshooting and learning much easier.

Before you pick the best data pipeline tools, take a close look at your current data landscape. Start by thinking about the types of data you use, where it comes from, and how much you expect to handle in the future. Make a list of your sources—databases, APIs, files, and cloud services. Ask yourself if you need real-time data or if batch processing works for your business. Here’s a quick checklist to guide you:

FineDataLink makes this step easier with its low-code setup and support for over 100 data sources, so you can connect everything without extra hassle.

You want your data orchestration tools to move data smoothly between all your systems. The best data pipeline tools let you connect databases, SaaS apps, and BI platforms without headaches. Flexibility is key, especially if you use both cloud and on-premise systems. FineDataLink stands out here, offering seamless data integration and API connectivity, which means your data workflows stay efficient and unified.

Tip: Choose a platform that centralizes control and supports hybrid environments for maximum flexibility.

Your business will grow, and your data needs will change. The best data pipeline tools should handle more data without slowing down. Real-time data pipelines are now essential for things like fraud detection, predictive maintenance, and instant personalization. FineDataLink supports real-time data synchronization with low latency, so your data workflows stay fast and responsive as your business evolves.

No one wants to spend weeks learning a new tool. Look for data orchestration tools with a user-friendly interface and clear setup steps. FineDataLink’s low-code, drag-and-drop design helps you build and manage data workflows quickly—even if you’re not a coding expert. This means you can focus on insights, not troubleshooting.

Great support and resources make a huge difference. When you have access to detailed documentation, helpful videos, and an active community, you solve problems faster and learn best practices. FineDataLink offers step-by-step guides and responsive support, so you always have help when you need it.

Remember: The right support and community can turn a good tool into the best data pipeline tool for your team.

When you start with a new data orchestration platform, you might run into a few common problems. Sometimes, data quality and consistency issues pop up. You may find errors or conflicting definitions in your source data. Data silos can also slow you down, making it hard to share and integrate information across teams. Managing lots of automated jobs can get complicated fast. Security risks are always a concern, too. Here’s a quick look at these challenges and how you can tackle them:

| Challenge | Description | Solution |
|---|---|---|
| Data quality and consistency | Errors or conflicting definitions in source data | Cleanse and validate data, set clear business rules |
| Breaking down data silos | Hard to share and integrate data across teams | Treat as a change project, set up governance, show quick wins |
| Operational complexity & monitoring | Too many automated jobs can get confusing | Use monitoring tools, document workflows, start small |
| Security and compliance risks | Central systems can be targets for attacks | Build in security, use strong authentication, audit access regularly |

FineDataLink by FanRuan helps you avoid these pitfalls. Its visual interface and real-time monitoring make management of data workflows much easier. You can also automate data cleansing and validation steps to keep your data integration smooth.
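A cleansing and validation step can be quite small. The sketch below is a generic Python illustration, not FineDataLink functionality. The field names (`id`, `country`) and the rules are assumptions chosen for the example, and it expects `id` to be listed among the required fields.

```python
def validate_rows(rows, required_fields):
    """Split rows into valid ones and rejected ones with a reason attached."""
    valid, rejected = [], []
    seen_ids = set()
    for row in rows:
        missing = [f for f in required_fields if row.get(f) in (None, "")]
        if missing:
            rejected.append((row, "missing: " + ", ".join(missing)))
        elif row["id"] in seen_ids:
            rejected.append((row, "duplicate id"))
        else:
            seen_ids.add(row["id"])
            # Normalize a text field so later joins compare like with like.
            valid.append({**row, "country": row["country"].strip().upper()})
    return valid, rejected

rows = [
    {"id": 1, "country": " us "},
    {"id": 2, "country": ""},
    {"id": 1, "country": "DE"},
]
valid, rejected = validate_rows(rows, required_fields=["id", "country"])
print(valid)
print([reason for _, reason in rejected])
```

Keeping the rejected rows together with a reason, rather than dropping them silently, gives you something to review when you set your business rules.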

You need to keep your data safe and follow the rules. Leading platforms use end-to-end encryption, strong access controls, and follow standards like GDPR and HIPAA. FineDataLink supports these features, so you can trust your data is secure. Here are some common security features:

FineDataLink also makes it easy to set up governance and audit trails, so you always know who accessed your data and when.

Planning your resources helps you avoid surprises. You need to think about CPU, memory, storage, and network needs. Here’s a simple table to help you plan:

| Resource Type | Considerations |
|---|---|
| CPU | Match to your workload |
| Memory | Size for your data processing |
| Storage | Make sure you have enough for retention |
| Network | Optimize for fast and efficient data transfer |

FineDataLink’s low-code platform helps you optimize resources. You can start small and scale up as your data integration needs grow.
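For the storage row, a back-of-the-envelope estimate is often enough to start. Here is a small sketch. The formula and the numbers (50 GB per day, 90 days of retention, two copies, 20% headroom) are illustrative assumptions, not vendor guidance.

```python
def storage_needed_gb(daily_ingest_gb, retention_days, replication_factor=1, headroom=0.2):
    """Estimate storage for a retention window, with spare capacity on top."""
    raw = daily_ingest_gb * retention_days * replication_factor
    return raw * (1 + headroom)

# Assumed workload: 50 GB/day, kept for 90 days, stored twice.
estimate = storage_needed_gb(daily_ingest_gb=50, retention_days=90, replication_factor=2)
print(round(estimate), "GB")
```

Swap in your own ingest rate and retention policy, then revisit the estimate as your data volumes grow.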

Change can be tough. Your team might need time to adjust to new tools and processes. Training is key. FineDataLink offers step-by-step guides and a user-friendly interface, so your team can learn quickly. You can also show early wins to build support and keep everyone motivated.

Tip: Start with a pilot project. Get feedback from your team. Use those lessons to roll out data workflows across your business.

Let’s look at how BOE, a global leader in IoT and semiconductor displays, transformed its business with FineDataLink by FanRuan. BOE faced a big challenge. Its data was scattered across many systems. Different teams used different metrics, which made it hard to get a clear picture. You can imagine how confusing that gets when you want to make fast decisions.

BOE decided to use FineDataLink for data integration. The platform helped BOE pull data from all its sources into one place. With FineDataLink, BOE built a unified data warehouse and set up clear rules for metrics. The company also created dashboards to track key performance indicators. This move made data workflows much smoother.

Here’s what happened next:

BOE’s story shows how the right data integration tool can help you break down silos and boost your business intelligence.

You’re not alone if you want to improve your data workflows. Many companies in manufacturing, retail, and finance have seen big results with modern data orchestration tools.

These examples show that when you choose the right platform, you can make your data work for you. You get faster insights, better decisions, and a stronger business.

You have plenty of choices when it comes to data orchestration tools. Pick a platform that matches your business goals and technical needs. Make sure it can grow with you. Try out demos or free trials, such as FineDataLink’s, to see what fits best. If you want more help, talk to experts or read customer stories. Good data integration will help you unlock new insights and keep your workflows running smoothly.

Ready to take the next step? Explore your options and see how the right tool can transform your data journey.

The Author

Howard

Data Management Engineer & Data Research Expert at FanRuan
