An engineering KPI dashboard should help leaders make better decisions, not just collect more data. If you are an engineering manager, operations director, PMO lead, or CTO, the real challenge is rarely a lack of metrics. The problem is choosing measures that actually guide prioritization, expose risk early, and improve delivery without driving the wrong behavior.

Too many teams build dashboards around what is easiest to count: tickets closed, commits made, hours logged, or story points completed. Those numbers may look busy, but they do not reliably tell you whether engineering is delivering predictable outcomes, maintaining quality, protecting reliability, or supporting business goals.

The right dashboard depends on team type, delivery model, and stakeholder expectations. A product engineering team should not be measured like a platform team. A project-based engineering function needs different visibility than an architecture review group. When all teams are forced into the same dashboard, reporting gets distorted and decision-making gets weaker.

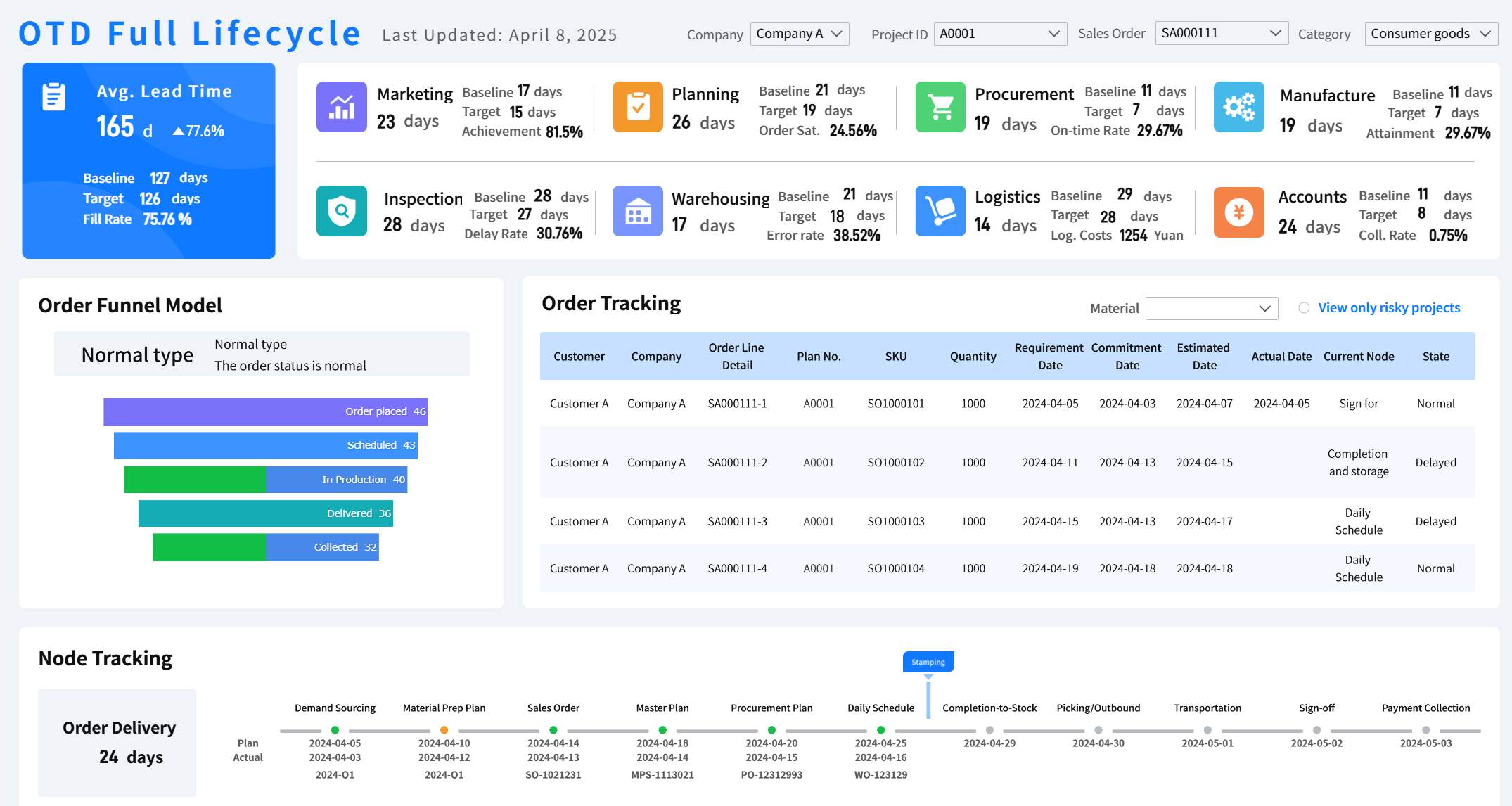

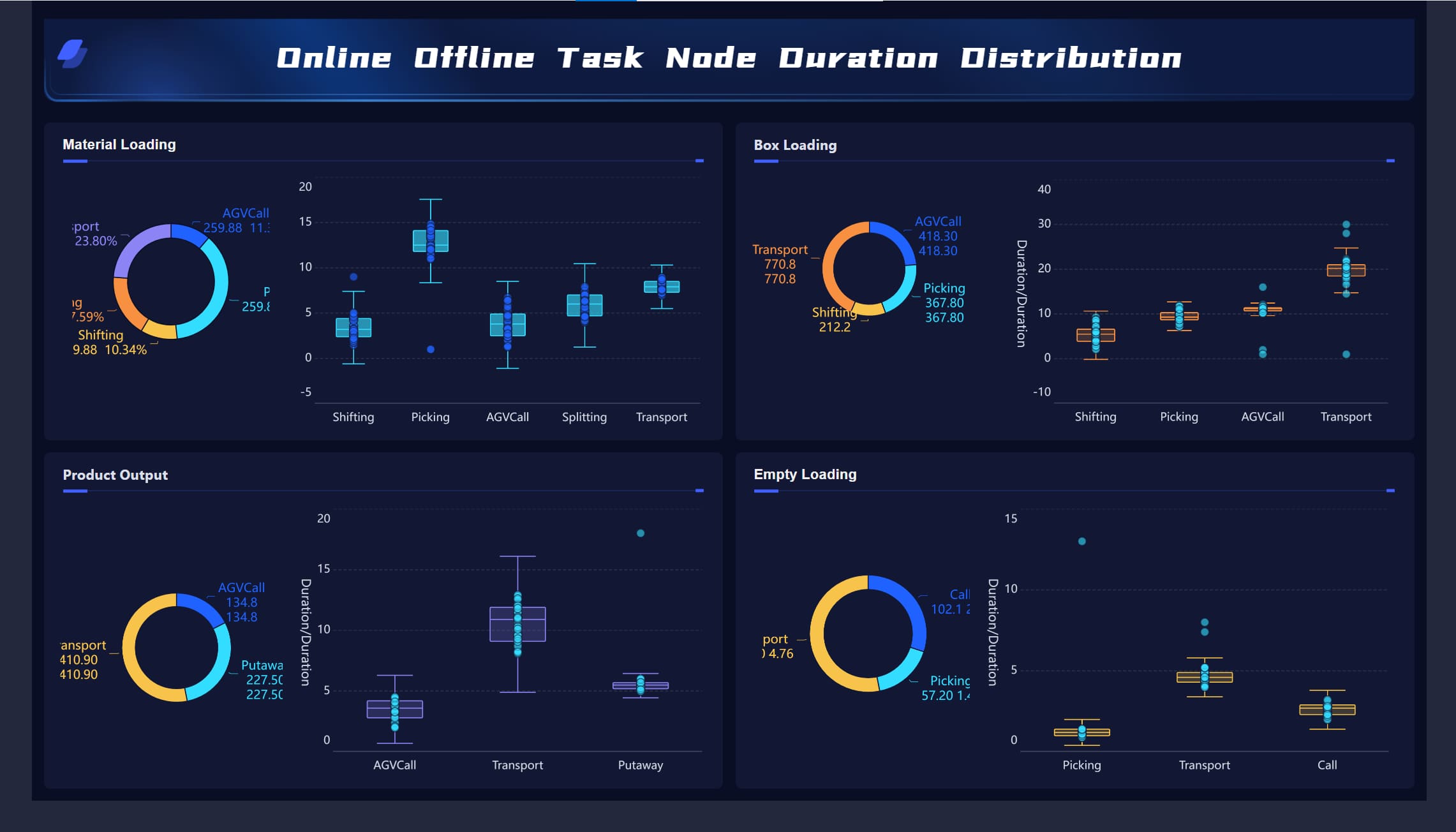

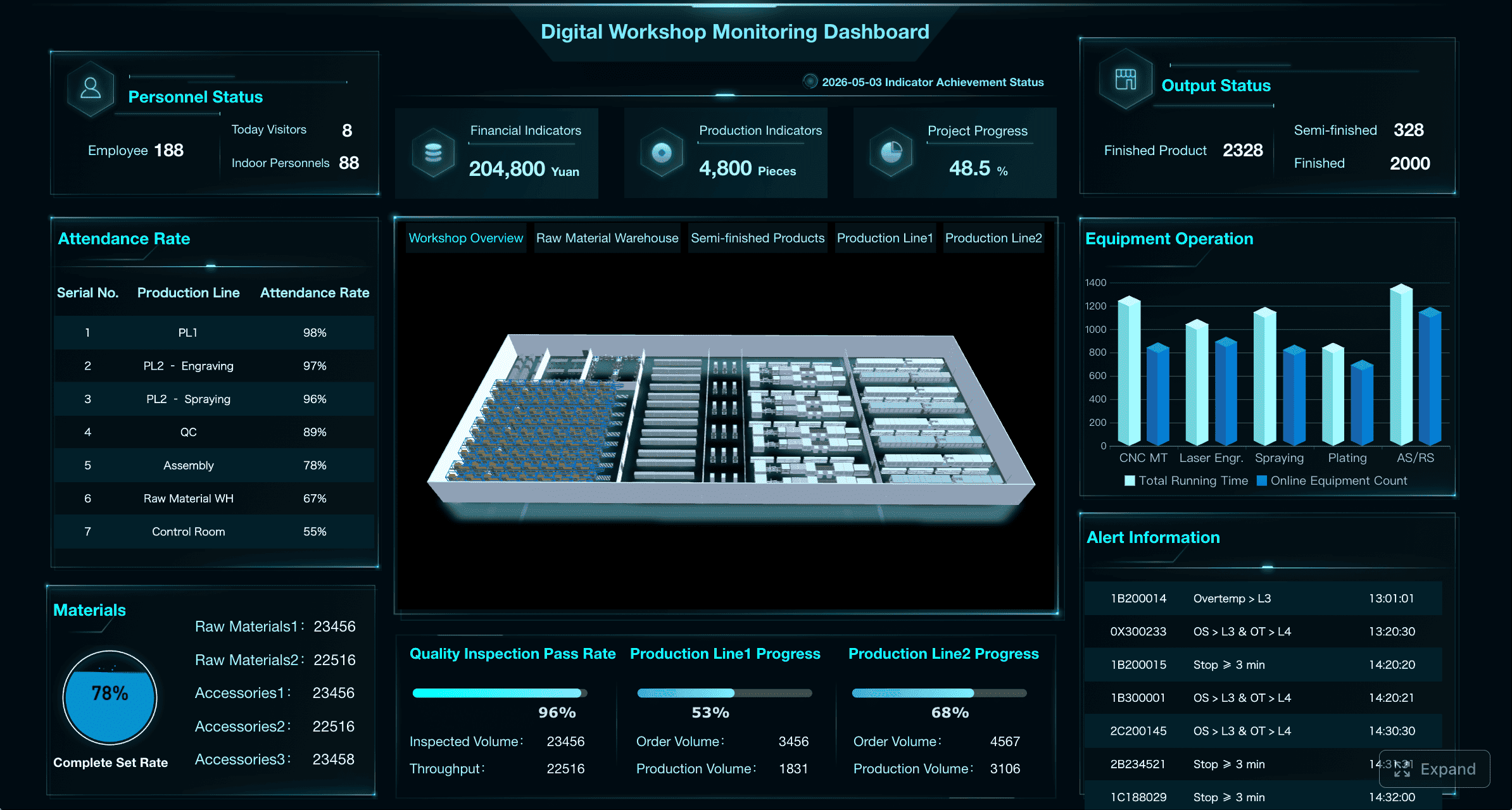

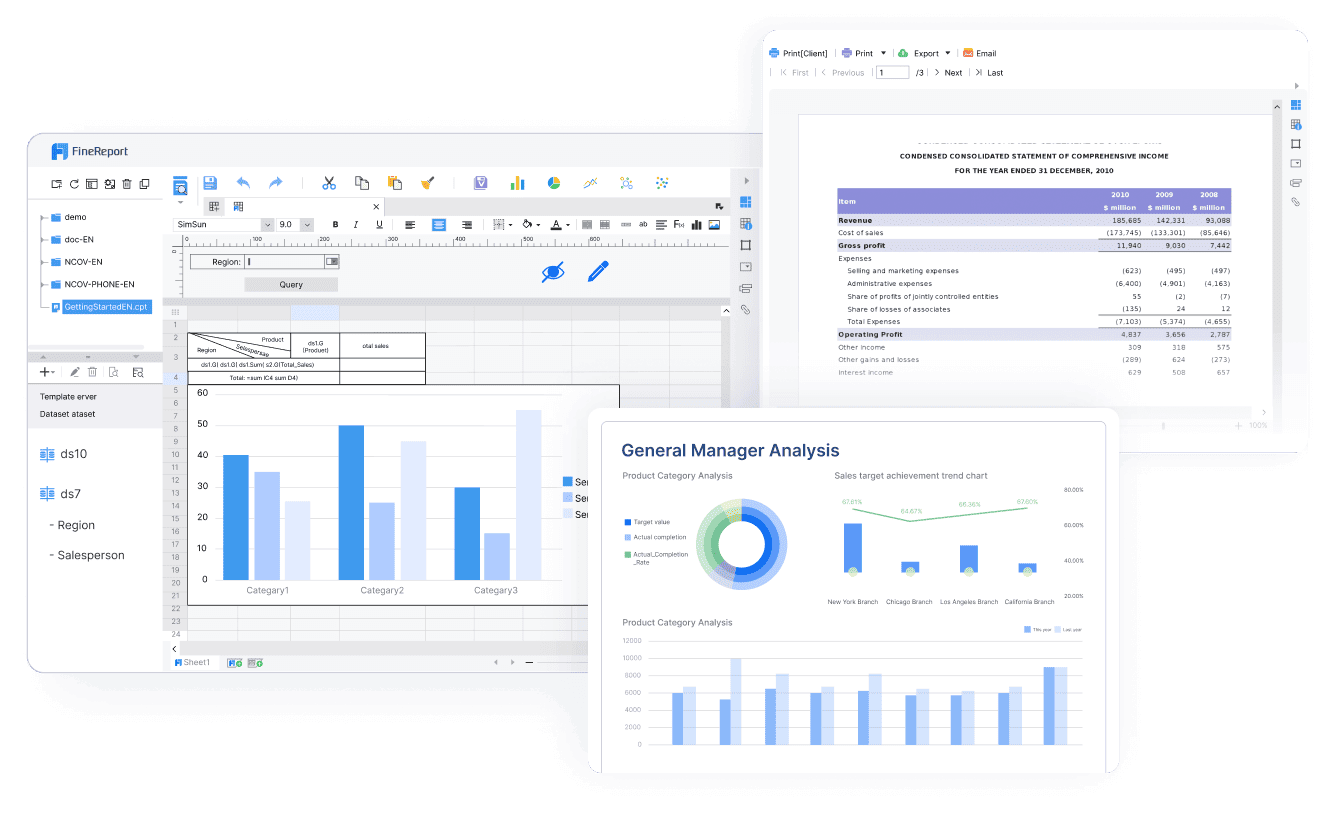

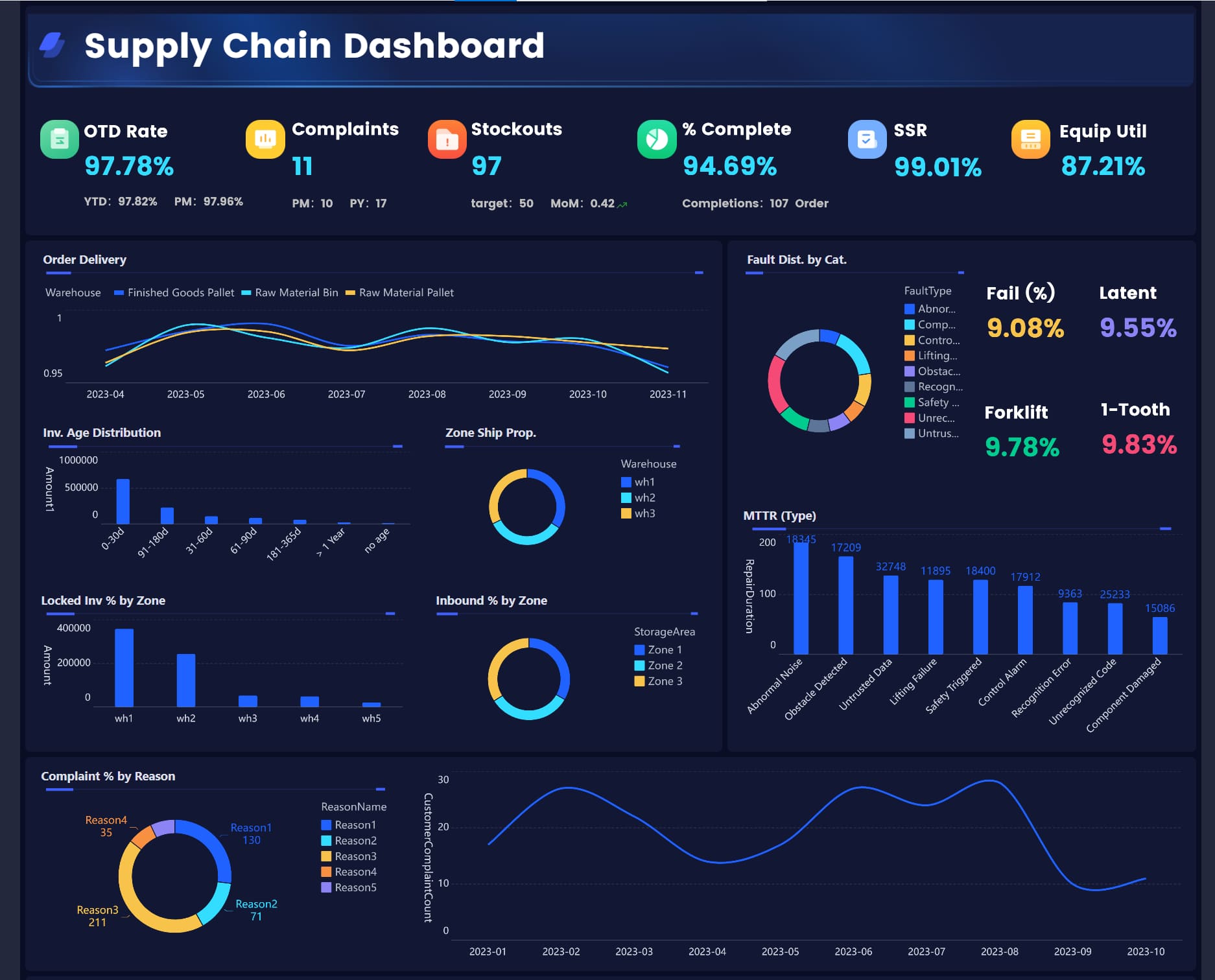

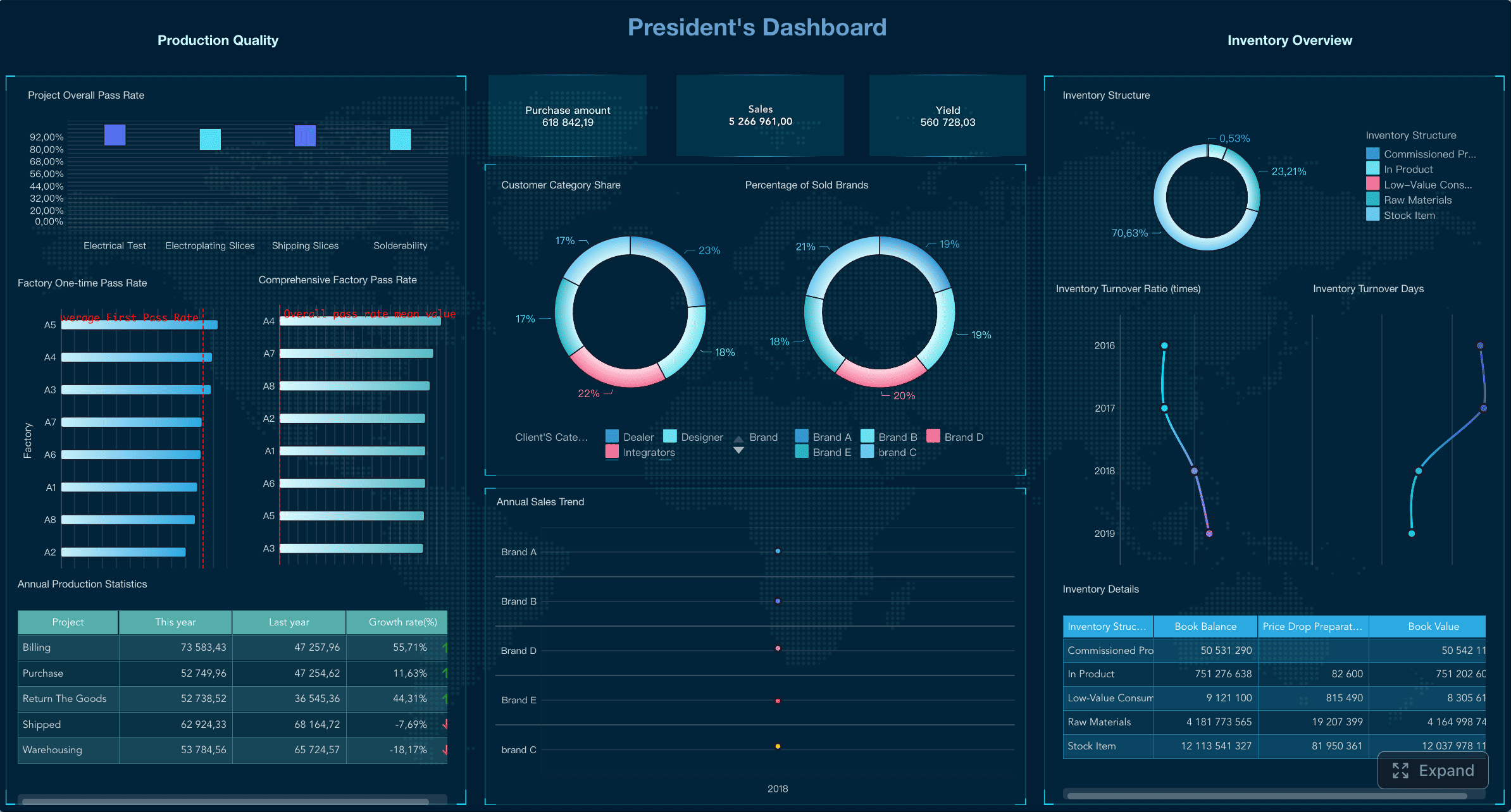

All dashboards in this article were created with FineReport.

A good engineering KPI dashboard gives teams a shared operating view. It turns scattered project data, quality signals, and operational performance into a small number of metrics that support action. At its best, it helps leaders answer four questions quickly:

That is the business value of an engineering KPI dashboard: faster decisions, earlier intervention, and better alignment between engineering work and enterprise outcomes.

Not every number belongs on a KPI dashboard.

KPIs are measures tied to a defined objective and a recurring decision. They should influence prioritization, resource allocation, escalation, or process improvement.

Activity metrics track work volume, such as tickets created, pull requests opened, or meeting counts. These can support diagnosis, but they are rarely strategic on their own.

Vanity metrics look impressive but lack operational meaning. Examples include raw deployment count without context, total lines of code written, or cumulative task totals that always trend upward.

One-off reports answer a temporary question, such as a postmortem analysis or special audit request. Useful, yes. Core dashboard material, usually no.

A practical rule: if a metric does not have a clear owner, decision, and review cadence, it probably does not belong on your main engineering KPI dashboard.
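
To make the rule concrete, here is a minimal Python sketch that screens candidate metrics before they reach the main dashboard. The `KpiCandidate` fields and the sample entries are hypothetical. The only logic taken from this article is that a metric needs an owner, a decision, and a review cadence.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class KpiCandidate:
    # Hypothetical fields mirroring the owner / decision / cadence rule.
    name: str
    owner: Optional[str] = None           # person or role accountable for the metric
    decision: Optional[str] = None        # recurring decision the metric informs
    review_cadence: Optional[str] = None  # e.g. "weekly" or "monthly"


def belongs_on_main_dashboard(kpi: KpiCandidate) -> bool:
    """True only if the metric has an owner, a decision, and a review cadence."""
    return all(
        value is not None and value.strip() != ""
        for value in (kpi.owner, kpi.decision, kpi.review_cadence)
    )


candidates = [
    KpiCandidate("Cycle time", "Delivery manager", "Adjust WIP limits", "weekly"),
    KpiCandidate("Lines of code written"),
    KpiCandidate("Escaped defects", "QA lead", "", "monthly"),  # no decision defined
]

main_dashboard = [k.name for k in candidates if belongs_on_main_dashboard(k)]
print(main_dashboard)  # ['Cycle time']
```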

Metrics only make sense in context. Teams work under different constraints:

A dashboard should reflect the mission of the team, the risks it manages, and the decisions stakeholders need to make. Otherwise, teams either ignore the dashboard or game the numbers.

The most effective engineering KPI dashboard starts with operating reality, not a generic template. Choose KPIs by working backward from mission, decisions, and accountability.

First define what the team exists to accomplish. Then identify who depends on that team and what constraints shape performance.

Ask:

For example, a platform team serving internal developers may need service-level metrics and internal customer sentiment. A project engineering team may need cost variance, milestone health, and scope change trends.

Every KPI on the dashboard should answer three governance questions: who owns it, what decision it informs, and how often it is reviewed.

This prevents dashboard clutter and makes the reporting system operationally useful.

One of the most common mistakes in engineering measurement is overweighting output. High throughput with rising defects, unstable releases, or poor adoption is not high performance. Strong dashboards balance four lenses: delivery, quality, reliability, and business impact.
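
One way to check that balance is to tag each KPI with a lens and look for gaps. The sketch below assumes the four lenses are delivery, quality, reliability, and business impact, the focus areas named in this article's summary. The lens labels, the `lens_coverage` helper, and the sample dashboard are illustrative, not a FineReport feature.

```python
from collections import Counter

# Assumption: the four lenses named in this article's summary.
LENSES = ("delivery", "quality", "reliability", "business_impact")


def lens_coverage(kpis: dict[str, str]) -> dict:
    """Report lenses with no KPI and each lens's share of the dashboard.

    `kpis` maps a KPI name to the lens it is tagged with (hypothetical tagging).
    """
    counts = Counter(kpis.values())
    total = len(kpis)
    return {
        "missing": [lens for lens in LENSES if counts[lens] == 0],
        "share": {lens: (counts[lens] / total if total else 0.0) for lens in LENSES},
    }


# An output-heavy dashboard: three delivery metrics, one quality metric.
dashboard = {
    "Throughput": "delivery",
    "Story points completed": "delivery",
    "Cycle time": "delivery",
    "Escaped defects": "quality",
}

report = lens_coverage(dashboard)
print(report["missing"])            # ['reliability', 'business_impact']
print(report["share"]["delivery"])  # 0.75
```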

Below is a practical KPI set for a modern engineering KPI dashboard. These are not all required for every team, but they represent the core categories that matter most.

Product engineering teams should focus on predictable delivery and product quality, while staying linked to user and business outcomes.

Useful KPIs include:

This mix helps product and engineering leaders see whether roadmap commitments are realistic and whether delivered features are stable enough to create value.

Platform and infrastructure teams should be measured on reliability, operational resilience, and internal customer enablement.

Useful KPIs include:

For these teams, a dashboard overloaded with feature counts misses the point. Reliability and usability of internal services matter more than visible output volume.

Project-based engineering teams need strong control over time, cost, scope, and risk.

Useful KPIs include:

This set helps PMOs and engineering leads spot delivery drift before it becomes a contractual, financial, or client issue.

Architecture, design assurance, security review, and specialized engineering teams often operate through review cycles, approvals, standards, and advisory outputs.

Useful KPIs include:

For these teams, dashboard design should emphasize service responsiveness and quality of technical governance rather than raw production output.

A trustworthy engineering KPI dashboard should be structured around categories that map to business decisions. This makes the dashboard easier to scan and easier to govern.

Delivery flow metrics show how work moves through the system.

Common examples:

These metrics reveal whether delivery is predictable and whether bottlenecks are structural or temporary.
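
Lead time and cycle time are the usual starting point for delivery flow. The sketch below assumes lead time runs from ticket creation to release and cycle time from the start of work to release. Those clock boundaries, the field names, and the sample tickets are assumptions, and your own definitions may differ. It reports medians because flow data is usually skewed by a few long-running items.

```python
from datetime import datetime
from statistics import median

# Hypothetical work items. Assumed clock boundaries:
#   lead time  = created -> released
#   cycle time = started -> released
items = [
    {"id": "ENG-1", "created": "2024-03-01", "started": "2024-03-04", "released": "2024-03-08"},
    {"id": "ENG-2", "created": "2024-03-02", "started": "2024-03-10", "released": "2024-03-12"},
    {"id": "ENG-3", "created": "2024-03-05", "started": "2024-03-06", "released": "2024-03-15"},
]


def days_between(start: str, end: str) -> int:
    """Whole days between two ISO-format dates."""
    return (datetime.fromisoformat(end) - datetime.fromisoformat(start)).days


lead_times = [days_between(i["created"], i["released"]) for i in items]
cycle_times = [days_between(i["started"], i["released"]) for i in items]
# Per-item time spent waiting before anyone started the work.
wait_times = [lt - ct for lt, ct in zip(lead_times, cycle_times)]

print("lead times:", lead_times)    # [7, 10, 10]
print("cycle times:", cycle_times)  # [4, 2, 9]
print("median lead time:", median(lead_times))    # 10
print("median cycle time:", median(cycle_times))  # 4
```

The per-item gap between the two measures is queue time before work starts, which is often where structural bottlenecks show up first.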

Quality metrics show whether speed is coming at the cost of defects and rework.

Common examples:

Quality KPIs are essential because delivery volume without defect control creates hidden cost downstream.
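
A common quality KPI is the escaped defect rate: defects first found in production as a share of all defects found for a release. The sketch below uses that definition with made-up release counts. Some teams use a different denominator, such as defects per release or per thousand changes, so treat this as one possible definition.

```python
def escaped_defect_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of a release's defects that were first found in production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


# Made-up counts per release: (found in production, found before release)
releases = {"2.1": (3, 47), "2.2": (5, 45), "2.3": (9, 41)}

for version, (escaped, caught) in releases.items():
    rate = escaped_defect_rate(escaped, caught)
    print(f"release {version}: {rate:.0%} escaped")
```

In this sample the rate climbs from 6% to 18% across three releases, the kind of trend that suggests speed is starting to cost quality.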

Reliability metrics matter for any team supporting production systems, services, or internal platforms.

Common examples:

These metrics help operations leaders monitor resilience and understand whether engineering changes are improving or destabilizing the environment.
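
Three widely used reliability measures are change failure rate, mean time to restore, and availability. The functions below are a minimal sketch using their common definitions. The inputs (a list of deployment outcomes, incident restore durations, and total downtime) are assumed to come from your deployment and incident tooling, and the sample month is invented.

```python
from datetime import timedelta


def change_failure_rate(deploy_failed: list[bool]) -> float:
    """Fraction of deployments that led to a production failure (True = failed)."""
    return sum(deploy_failed) / len(deploy_failed) if deploy_failed else 0.0


def mean_time_to_restore(restore_durations: list[timedelta]) -> timedelta:
    """Average time from the start of an incident to restored service."""
    if not restore_durations:
        return timedelta(0)
    return sum(restore_durations, timedelta(0)) / len(restore_durations)


def availability(period: timedelta, downtime: timedelta) -> float:
    """Share of the reporting period during which the service was up."""
    return (period - downtime) / period


# Illustrative month: 20 deployments, 2 of which caused incidents.
outcomes = [False] * 18 + [True] * 2
restores = [timedelta(minutes=30), timedelta(minutes=90)]

print(change_failure_rate(outcomes))                                    # 0.1
print(mean_time_to_restore(restores))                                   # 1:00:00
print(round(availability(timedelta(days=30), timedelta(hours=2)), 4))  # 0.9972
```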

Project performance metrics are critical where commitments, budgets, or external timelines must be tightly controlled.

Common examples:

These are especially valuable for capital projects, client delivery functions, regulated programs, and engineering PMOs.
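
One common way to track cost and schedule drift on project-based work is earned value management (EVM). This sketch uses the standard EVM formulas (CV = EV - AC, SV = EV - PV, CPI = EV / AC, SPI = EV / PV). The class name and sample figures are hypothetical, and it assumes actual cost and planned value are nonzero.

```python
from dataclasses import dataclass


@dataclass
class EarnedValueSnapshot:
    """Earned value figures to date for one project (hypothetical example)."""
    planned_value: float  # PV: budgeted cost of work scheduled so far
    earned_value: float   # EV: budgeted cost of work actually completed
    actual_cost: float    # AC: what the completed work actually cost

    @property
    def cost_variance(self) -> float:
        return self.earned_value - self.actual_cost  # negative = over budget

    @property
    def schedule_variance(self) -> float:
        return self.earned_value - self.planned_value  # negative = behind schedule

    @property
    def cpi(self) -> float:
        return self.earned_value / self.actual_cost  # below 1.0 = over budget

    @property
    def spi(self) -> float:
        return self.earned_value / self.planned_value  # below 1.0 = behind schedule


project = EarnedValueSnapshot(planned_value=500_000, earned_value=450_000, actual_cost=480_000)
print(project.cost_variance, project.schedule_variance)  # -30000 -50000
print(project.cpi, project.spi)                          # 0.9375 0.9
```

With these figures the project has earned 90% of its planned value and gets about 94 cents of work per dollar spent, so it is both behind schedule and over budget.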

Team effectiveness metrics help leaders see whether process friction is slowing execution.

Common examples:

These should be interpreted carefully. They are best used to improve systems, not pressure individuals.

Outcome metrics connect engineering work to broader business value.

Common examples:

This is where many engineering dashboards fall short. Enterprise decision-makers want more than delivery data. They want to understand what engineering progress means for the business.

Different audiences need different dashboard views. A single engineering KPI dashboard can support multiple roles, but the views should be focused.

Executives need pattern recognition, forecast confidence, and exception visibility. Delivery managers need execution detail. Team leads need immediate operational signals. Trying to satisfy all three with one crowded view leads to dashboard fatigue.

A better approach is to create role-based views from one governed metric model.

A leadership dashboard should emphasize trends and risks. An operational dashboard should emphasize queue health, blockers, and threshold breaches.
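
A role-based view can be as simple as a filter over one shared set of metric definitions. The sketch below assumes each definition lists the roles that should see it; the `MetricDefinition` structure, role names, and registry contents are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    key: str
    label: str
    category: str              # e.g. "delivery", "quality", "reliability"
    audiences: frozenset[str]  # roles whose view includes this metric


# One governed registry (hypothetical contents) that every role-based view reads from.
REGISTRY = [
    MetricDefinition("lead_time_trend", "Lead time trend", "delivery",
                     frozenset({"executive", "delivery_manager"})),
    MetricDefinition("blocked_items", "Blocked work items", "delivery",
                     frozenset({"delivery_manager", "team_lead"})),
    MetricDefinition("change_failure_rate", "Change failure rate", "reliability",
                     frozenset({"executive", "team_lead"})),
    MetricDefinition("queue_age", "Oldest item in queue", "delivery",
                     frozenset({"team_lead"})),
]


def view_for(role: str) -> list[str]:
    """Labels of the governed metrics that belong in a given role's view."""
    return [m.label for m in REGISTRY if role in m.audiences]


print(view_for("executive"))  # ['Lead time trend', 'Change failure rate']
print(view_for("team_lead"))  # ['Blocked work items', 'Change failure rate', 'Oldest item in queue']
```

Because every view filters the same definitions, a change to how a metric is labeled or calculated happens once, and executives and team leads never see two versions of the same number.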

Lagging indicators show what already happened. Leading indicators help prevent the next problem.

Examples:

The best engineering KPI dashboard includes both.

Leadership views should not drown executives in detail. Focus on:

This supports portfolio steering and investment decisions.

For delivery reviews, the dashboard should answer whether work is on track and what is likely to slip.

Use metrics such as:

This is the control room for project and program leaders.

For quality and performance reviews, focus on operational outcomes and corrective action.

Use metrics such as:

This helps engineering and operations teams verify that quality interventions are working.

A dashboard only works if people trust it, understand it, and use it in recurring reviews. Adoption depends on clarity and relevance.

Most teams do not need dozens of tabs. Start with five views:

This structure makes the engineering KPI dashboard easier to navigate and keeps discussions focused.

Metrics without thresholds create ambiguity. Teams need to know what good, acceptable, and risky look like.

For each KPI, define:

This is especially important for enterprise environments where multiple teams report upward.
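
A threshold definition can be as simple as two boundaries and a direction. The sketch below classifies a value as good, acceptable, or risky. The `Threshold` class is hypothetical and the boundary values are placeholders for illustration, not recommended targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    good: float             # boundary of the "good" band
    risky: float            # boundary past which the value is "risky"
    higher_is_better: bool  # direction of improvement for this KPI

    def classify(self, value: float) -> str:
        """Return "good", "acceptable", or "risky" for a KPI value."""
        if self.higher_is_better:
            if value >= self.good:
                return "good"
            return "risky" if value < self.risky else "acceptable"
        if value <= self.good:
            return "good"
        return "risky" if value > self.risky else "acceptable"


# Placeholder thresholds for illustration only.
change_failure = Threshold(good=0.15, risky=0.30, higher_is_better=False)
uptime = Threshold(good=0.999, risky=0.995, higher_is_better=True)

print(change_failure.classify(0.10), change_failure.classify(0.22), change_failure.classify(0.35))
# good acceptable risky
print(uptime.classify(0.9995), uptime.classify(0.9972), uptime.classify(0.99))
# good acceptable risky
```

Storing the direction with the threshold matters: for change failure rate lower is better, for uptime higher is better, and a shared classifier should not have to guess.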

Metric governance is not optional. If one team defines lead time from ticket creation and another defines it from development start, comparison becomes meaningless.

Document:

This is one of the highest-leverage practices in dashboard design.
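
The sketch below shows why written definitions matter. Two teams both report `lead_time`, but one starts the clock at ticket creation and the other at development start, the mismatch described above. `MetricSpec`, the event names, and the ticket dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class MetricSpec:
    """A documented metric definition (hypothetical structure)."""
    name: str
    start_event: str  # timestamp that starts the clock
    end_event: str    # timestamp that stops the clock
    unit: str
    owner: str


def measure(spec: MetricSpec, item: dict[str, date]) -> int:
    """Elapsed days between the spec's start and end events for one work item."""
    return (item[spec.end_event] - item[spec.start_event]).days


ticket = {
    "created": date(2024, 5, 1),
    "dev_started": date(2024, 5, 13),
    "deployed": date(2024, 5, 20),
}

team_a = MetricSpec("lead_time", "created", "deployed", "days", "Team A lead")
team_b = MetricSpec("lead_time", "dev_started", "deployed", "days", "Team B lead")

print(measure(team_a, ticket), measure(team_b, ticket))  # 19 7
```

Same ticket, same metric name, a 19-day versus a 7-day answer. Recording the start and end events in one shared definition is what makes cross-team comparison meaningful.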

A dashboard should evolve with the team. New delivery models, reorganizations, product shifts, or platform changes may require KPI redesign.

Do not treat the first version as permanent.

The most common design failures are predictable:

If the dashboard does not make the next decision easier, it is too complicated.

Use this checklist before launch:

The value of an engineering KPI dashboard declines when old metrics remain in place long after they stop influencing decisions. Ongoing governance is what keeps reporting relevant.

At least quarterly, review whether each KPI still matters. Remove or replace metrics that no longer support planning, prioritization, or intervention.
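
A quarterly review is easier if you record when each KPI last informed a decision. This sketch assumes such a log exists (the data here is invented) and flags metrics that have not been used for 90 days, a rough stand-in for one quarter. The `retirement_candidates` helper is hypothetical.

```python
from datetime import date, timedelta
from typing import Optional

# Invented log: last date each KPI was cited in a planning, prioritization,
# or intervention decision (None = never cited).
last_used_in_decision: dict[str, Optional[date]] = {
    "Cycle time": date(2024, 6, 10),
    "Escaped defect rate": date(2024, 5, 2),
    "Story points completed": date(2023, 11, 20),
    "Total commits": None,
}


def retirement_candidates(
    log: dict[str, Optional[date]], review_date: date, window_days: int = 90
) -> list[str]:
    """KPIs not used in any decision within the window (90 days approximates a quarter)."""
    cutoff = review_date - timedelta(days=window_days)
    return sorted(name for name, last in log.items() if last is None or last < cutoff)


print(retirement_candidates(last_used_in_decision, review_date=date(2024, 6, 30)))
# ['Story points completed', 'Total commits']
```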

Every KPI creates behavioral incentives. If you optimize only for speed, quality may drop. If you optimize only for utilization, teams may hide slack needed for innovation or recovery.

Strong engineering leaders use dashboards to inform discussion, not force simplistic conclusions.

Numbers rarely explain themselves. Add short review commentary on:

This prevents overreaction and improves executive trust.

If team charters change, delivery tooling changes, or company priorities shift, your dashboard should change too. The engineering KPI dashboard must reflect the current operating model, not last year’s org chart.

Designing an engineering KPI dashboard that fits multiple team types is not conceptually hard, but building it manually is complex. You need reliable data integration, consistent KPI definitions, role-based views, threshold logic, trend visualizations, and governed refresh cycles. That becomes difficult fast, especially in enterprise environments with Jira, Git, CI/CD, service monitoring, spreadsheets, and project systems all feeding different versions of the truth.

This is where FineReport becomes the practical solution.

With FineReport, engineering leaders can use ready-made templates and automate this entire workflow. Instead of stitching together fragile reports manually, teams can centralize KPI logic, standardize dashboards across functions, and create tailored views for executives, delivery managers, quality leaders, and operations teams.

FineReport helps you:

For enterprise teams, the advantage is not just better visualization. It is better operational control. When the dashboard is trusted, current, and aligned to decisions, leaders can intervene earlier, communicate more clearly, and improve performance without drowning the organization in reporting overhead.

If your goal is to create an engineering KPI dashboard that teams actually use, the path is clear: define the right metrics by team type, govern them tightly, and avoid building the reporting stack from scratch. FineReport is the enabler that turns that framework into a scalable, enterprise-ready dashboard system.

A useful engineering KPI dashboard should focus on a small set of metrics across delivery, quality, reliability, and business impact. The exact mix should match the team’s mission, risks, and stakeholder decisions rather than using one standard template for every team.

Start with what the team is accountable for, who reviews its performance, and which decisions the dashboard needs to support. Then select metrics that are measurable, owned by someone, and reviewed on a clear cadence.

Metrics like lead time, cycle time, escaped defects, change failure rate, uptime, and business outcome measures usually provide stronger insight than raw activity counts. They help leaders see whether work is predictable, high quality, and creating value.

Most teams should keep the main dashboard limited to the few KPIs that directly support recurring decisions. If there are too many metrics, the dashboard becomes harder to read and easier to ignore.

Teams often track what is easiest to count instead of what drives action. That leads to vanity metrics, distorted behavior, and dashboards that look busy but do not improve delivery, quality, or reliability.

The Author

Yida Yin

FanRuan Industry Solutions Expert
