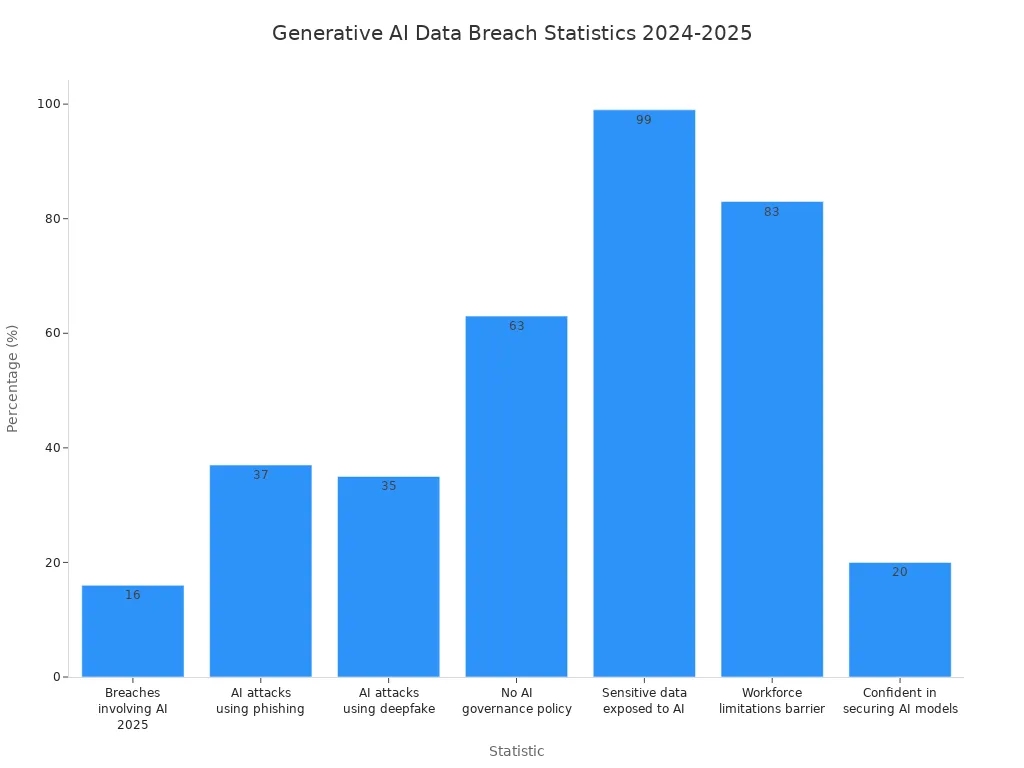

Generative AI data security protects your sensitive information and systems when you use AI to create new content. In 2025, you face growing risks from cyber security threats and stricter regulations. Attacks using deepfakes and phishing now target AI systems more than ever. Only 20% of organizations feel confident in securing these models, and 99% have exposed sensitive data to AI tools.

| Statistic | Value |

|---|---|

| Percentage of breaches involving AI in 2025 | 16% |

| Percentage of AI attacks using phishing | 37% |

| Percentage of AI attacks using deepfake | 35% |

| Organizations without AI governance policy | 63% |

| Organizations with exposed sensitive data to AI tools | 99% |

| Executives citing workforce limitations as a barrier | 83% |

| Organizations confident in securing generative AI models | 20% |

| High-risk AI tools among unverified OAuth apps | 1 in 4 |

You need robust data security for business intelligence platforms like FanRuan and products such as FineChatBI to protect your business and stay compliant.

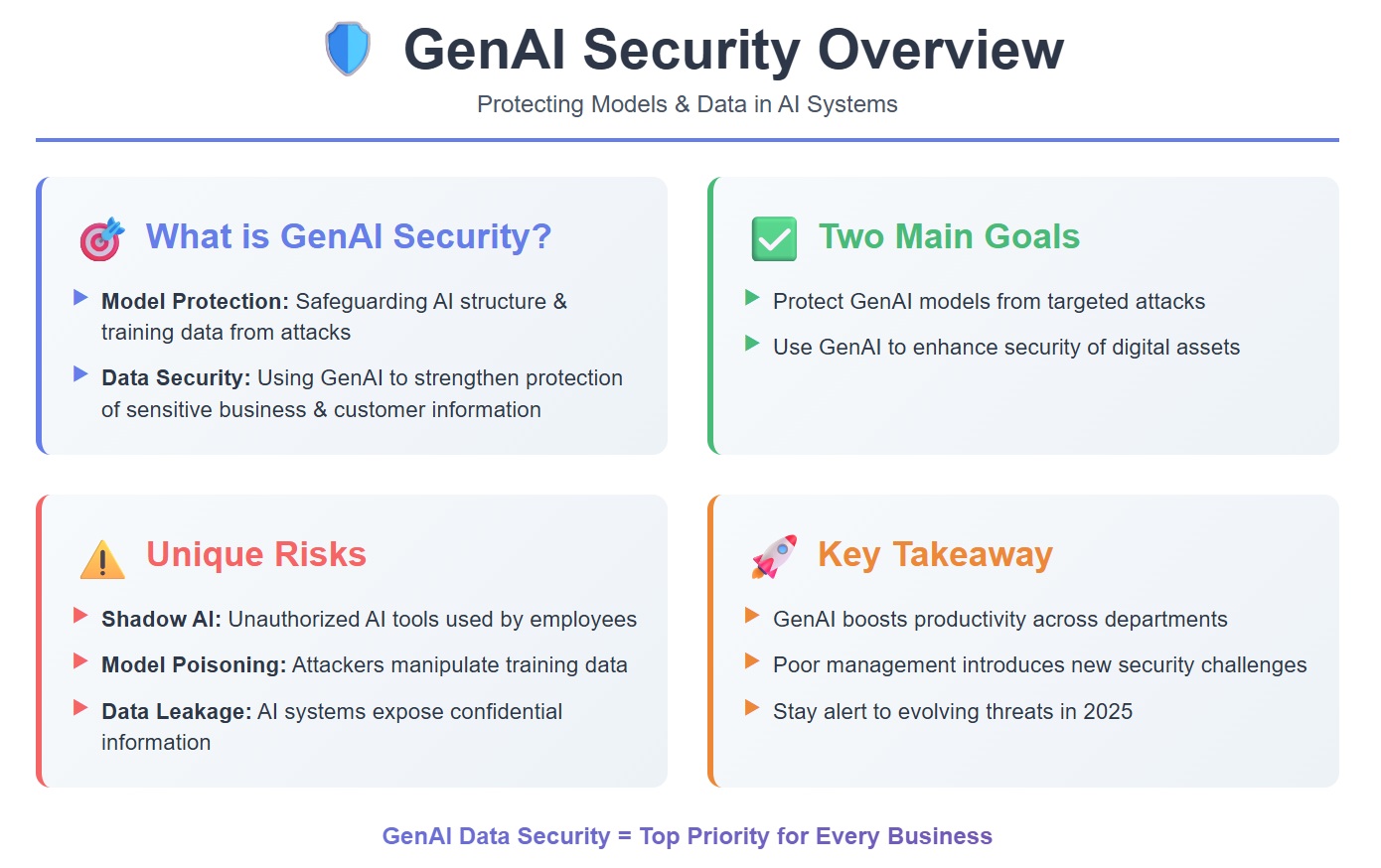

You need to understand genai security before you can protect your organization. Genai security covers the protection of both the models and the data that ai systems use and generate. When you use generative ai data security, you focus on two main goals:

Genai security brings unique risks. Shadow AI can appear when employees use unauthorized ai systems. Model poisoning happens when attackers manipulate the data that trains your ai systems. Data leakage can occur if your ai systems expose confidential information. These risks make generative ai data security a top priority for every business.

You see genai security as a way to boost productivity across departments. However, if you do not manage it properly, you introduce new security challenges. You must stay alert to the evolving threats that target ai systems in 2025.

Tip: Always monitor your ai systems for unusual activity. Early detection helps you stop threats before they cause harm.

You interact with many types of data when you use ai systems for business. Genai security must cover all these data types to keep your organization safe. The table below shows common data types processed and generated by ai systems in enterprise environments:

| Type of Data | Description |

|---|---|

| Marketing Content | Generating content for sales and marketing purposes. |

| Product Design | Assisting in the design of new products. |

| Synthetic Data | Creating realistic synthetic data for training ai models. |

| Rapid Prototyping and Innovation | Supporting quick development and innovation processes in enterprises. |

You may also handle customer data, financial records, and internal communications with ai systems. Genai security must protect all these data flows. If you overlook any type, you risk exposing sensitive information.

Genai security does not stop at protecting data. You must also secure the prompts, outputs, and even the feedback that ai systems use. Attackers can target any part of the process. You need a complete approach to generative ai data security to keep your business safe.

You see that genai security is not just a technical issue. It affects your entire organization. Every department that uses ai systems must follow best practices to protect data and models. You play a key role in building a secure future for your company.

You face a new landscape of cyber threats in 2025. Attackers now target ai systems with advanced tactics. Genai security must address these new threats to generative ai. You see risks like data leakage, prompt injection, and model theft. These security risks can expose sensitive business data or even allow attackers to manipulate your ai systems.

You must also watch for shadow IT. Employees sometimes use unauthorized ai systems, which can lead to uncontrolled data access. This makes it hard to track where your data goes. Genai security helps you control these risks by monitoring all ai systems in your organization.

Cyber threats have grown more complex. Attackers use deepfakes and phishing to trick ai systems. You need to protect your data security at every step. Genai security gives you tools to detect and stop these attacks before they cause harm.

The table below shows how security risks in generative ai differ from traditional ai:

| Aspect | Generative AI Risks | Traditional AI Risks |

|---|---|---|

| Data Control and Transparency | Limited visibility into data movement and processing, complicating compliance audits. | More established data handling protocols. |

| Information Governance and Compliance | Concerns over data residency and third-party access, especially in regulated industries. | Generally clearer compliance frameworks. |

| Shadow IT and User Autonomy | Risk of unauthorized tools leading to uncontrolled data access. | More centralized control over tools used. |

| Auditability and Accountability | Lack of standardized auditing for data accessed by AI assistants. | Established auditing practices exist. |

Note: Genai security must adapt quickly. You cannot rely on old methods to protect your ai systems from new threats.

You rely on ai systems to drive business intelligence and decision-making. Genai security protects your data security and keeps your business running smoothly. If you ignore security risks, you risk losing customer trust and facing regulatory penalties.

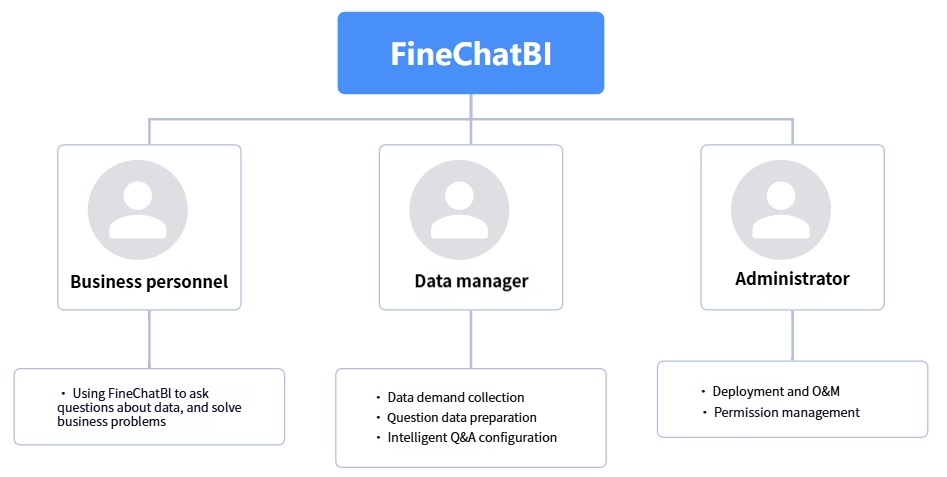

Business intelligence platforms like FanRuan and FineChatBI depend on strong genai security. These platforms process large amounts of sensitive data. You need to ensure that only authorized users can access this information. Genai security helps you manage user permissions and monitor data flows.

You also need to meet strict compliance rules. Genai security supports your efforts to pass audits and avoid fines. When you secure your ai systems, you protect your reputation and keep your business competitive.

Genai security is not just about technology. You must train your teams and set clear policies. This helps everyone understand their role in protecting your organization from cyber threats.

You face data breaches as one of the most serious genai security risks. Data leakage happens when confidential information escapes from your ai systems. You may see this occur because of integration drift, where outdated connections to APIs or other systems expose data by accident. Sometimes, you include sensitive information like PII in your training data. This can lead to privacy leaks if the model generates outputs that reveal this information.

You also need to watch for overfitting. When your model learns too closely from training data, it may repeat sensitive details. Using third-party ai services can create threat vectors if you feed proprietary data into external models. Prompt oversharing is another risk. Users may enter queries that contain confidential information, causing privacy leakage. Vector-store poisoning and model hallucination can distort search results or generate incorrect data, increasing the risk of data leaks.

Tip: Regularly audit your data sources and training sets to reduce the chance of data breaches.

Prompt injection is a growing genai security threat. Attackers craft malicious queries to trick your ai systems into revealing restricted data. You must monitor user inputs and set strict controls to prevent prompt injection. This threat vector can bypass normal safeguards and expose sensitive business information. You need to educate your team about the risks and encourage careful use of prompts.

Prompt injection does not only cause data breaches. It can also lead to security breaches by allowing attackers to manipulate outputs. You must treat every prompt as a potential risk and use genai security tools to detect suspicious activity.

Model theft is a major concern for organizations using ai systems in business intelligence. If someone steals your model, you lose intellectual property and competitive advantage. You may see compromised operational integrity and increased vulnerabilities. Model theft can open new threat vectors for attackers, leading to more data breaches and security risks.

You must protect your models with strong genai security measures. Use encryption, access controls, and monitoring to keep your models safe. Model theft does not only affect your business. It can also harm your customers and partners by exposing sensitive data.

Note: Genai security is your best defense against security threats like model theft and data leaks.

You need strong security mechanisms to protect your organization’s data in generative AI systems. Genai security starts with a layered approach. You should use several methods together to reduce security risks and keep your information safe.

You should combine these tools to build a strong genai security foundation. When you use encryption protocols and access controls together, you make it much harder for attackers to reach your data. Data masking and prompt filtering add extra layers of data protection. Real-time monitoring lets you respond fast if you notice a problem.

Tip: Review your security mechanisms often. Update your processes as new threats appear to keep your genai security strong.

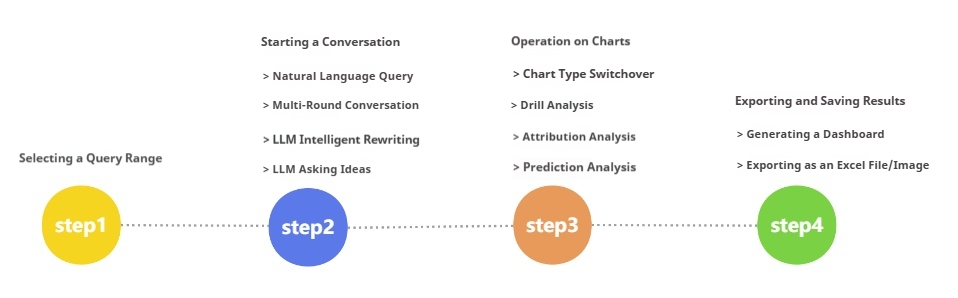

You can use FanRuan and FineChatBI as examples of secure generative AI and business intelligence platforms. These solutions show how you can balance innovation with data protection and compliance.

| Best Practice | Description |

|---|---|

| Data Classification | Classify data by sensitivity and business impact. This step helps you control access better. |

| Access Controls | Set AI-aware policies that limit data handling based on the model’s stage and user roles. |

| Monitoring | Use network detection and monitoring to spot abnormal activity and prevent data exfiltration. |

FineChatBI helps you control user permissions and monitor access. This feature prevents unauthorized data leaks. You can safely export results and share insights without exposing sensitive information. Automated anomaly detection lets you find unusual patterns and take action before a threat grows.

FanRuan supports you in mapping sensitive data flows and setting up strict governance. You can adapt quickly to new regulations and security risks. The platform helps you balance data protection with smart data use, which is key for business intelligence and AI innovation.

You can see these strategies in action with real-world use cases. NTT DATA Taiwan used FanRuan to build a unified data platform. They integrated systems like ERP, POS, and CRM using ETL processes. The platform visualized data for better decision-making. They focused on robust data security to protect sensitive information and reduce the risk of unauthorized access.

If you want to secure your generative AI data, follow these steps:

Note: Genai security is not a one-time task. You need to review and improve your processes as threats change. Use encryption protocols, access controls, and monitoring to keep your data secure.

By following these steps and using platforms like FineChatBI, you can protect your organization from security risks. You will also meet compliance requirements and support your business intelligence goals. Generative ai data security gives you the confidence to use AI for innovation while keeping your information safe.

You must pay close attention to new regulations in 2025. Laws around the world now demand strict controls over how you collect, store, and use data in generative AI systems. If you work for a multinational organization, you face even greater challenges. You need to follow rules from different countries and regions. These rules often require you to prove that your data comes from legal sources and that you have consent to use it.

A table can help you see how these steps support compliance:

| Compliance Step | Why It Matters |

|---|---|

| Data Management | Ensures legal sourcing and consent for AI data |

| Continuous Monitoring | Tracks system performance and detects deviations |

| Employee Training | Keeps staff informed about changing regulations |

Tip: Stay proactive. Review your compliance policies often to avoid penalties and protect your reputation.

You need a solid data governance strategy to keep your generative AI projects safe and reliable. FanRuan helps you build this foundation from the start. You can set up a governance framework early in your AI lifecycle. This guides every step of development and keeps your data secure.

Data governance supports data security and compliance. FanRuan gives you tools to map data flows, set permissions, and monitor activity. You can respond quickly to new regulations and threats. When you use FanRuan, you create a culture of accountability and transparency. This helps your organization succeed with generative AI.

Remember: Good governance is not just about technology. It is about people, processes, and a commitment to responsible AI.

You face new challenges in data security as generative AI evolves. To protect your organization, focus on these key actions:

| Strategy | Description |

|---|---|

| Legal Analysis | Review laws and standards for compliance. |

| Employee Training | Teach staff secure and responsible AI use. |

| Ongoing Monitoring | Regularly check systems for risks and compliance gaps. |

Stay proactive. Strong data security and governance will help you unlock the full value of secure BI platforms.

The Author

Lewis

Senior Data Analyst at FanRuan

Related Articles

What Is a Data Agent? A Practical Beginner’s Guide to How It Works

A data agent is one of the easiest ways to make business data feel more accessible. Instead of opening dashboards, writing SQL, or asking an analyst for help, you can ask a question in plain language and get an answer ba

Saber CHEN

Apr 02, 2026

Create AI Dashboards Instantly Without Coding

Create an ai dashboard instantly without coding. Connect data, analyze, and build dashboards in minutes using AI tools for fast, secure insights.

Lewis

Dec 29, 2025

FineChatBI vs Mercury Labs MLX Dashboard Performance in 2026

Compare FineChatBI and Mercury Labs MLX dashboard performance, speed, features, and integration to choose the best mlx dashboard for your business in 2026.

Saber

Dec 22, 2025